Hey

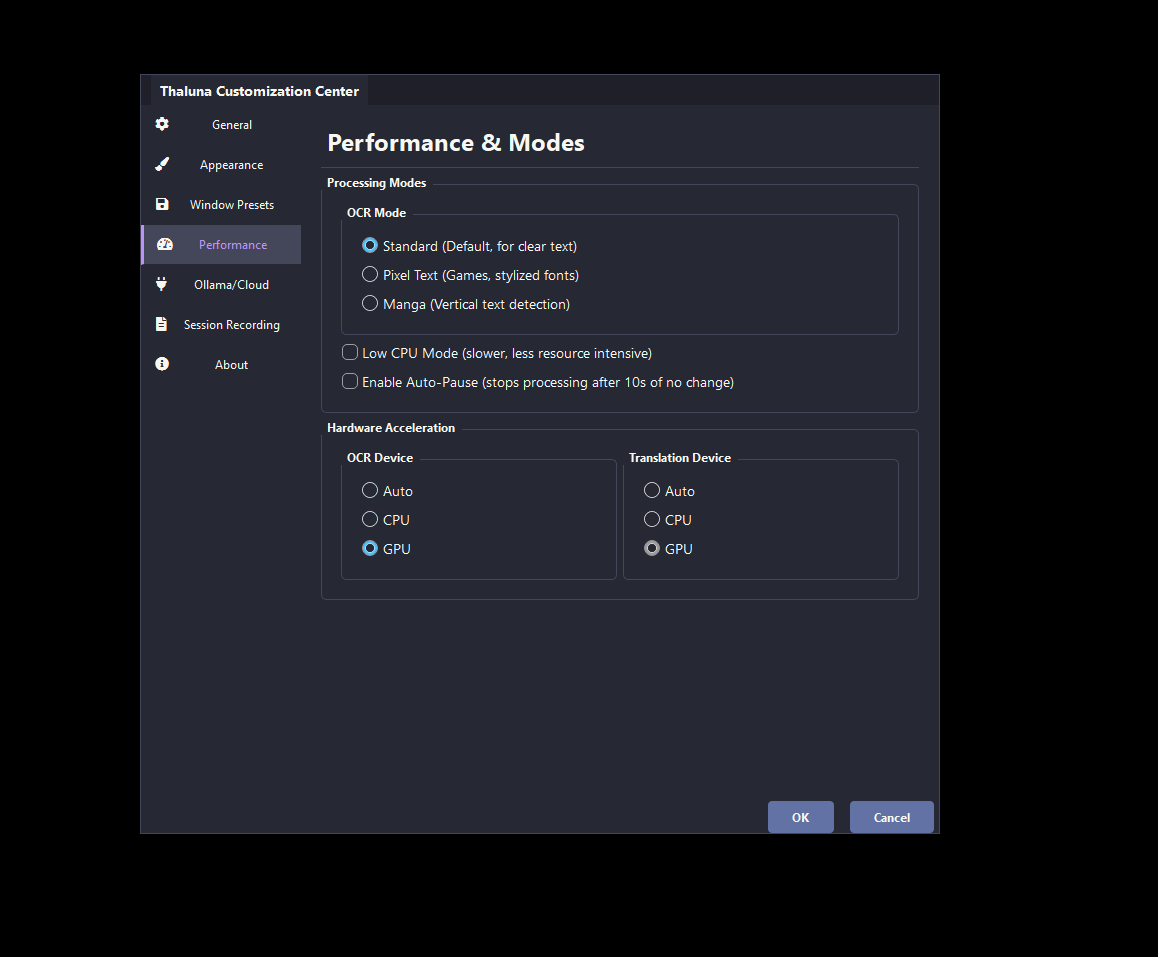

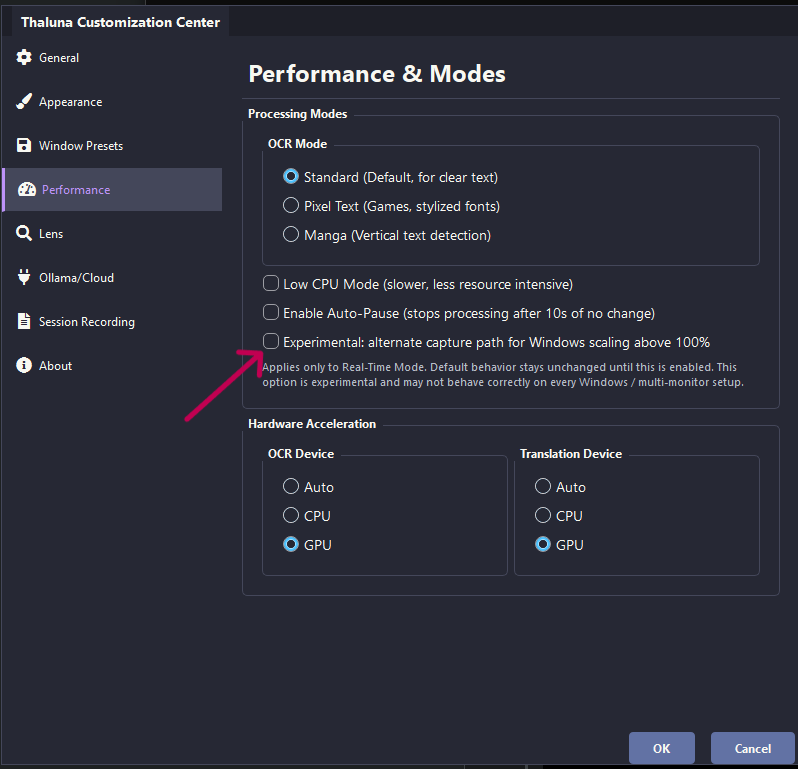

This is expected on that type of laptop: it has integrated graphics only (no dedicated GPU), so everything runs on the CPU.

That can cause lag or stuttering during startup, especially while models are loading.

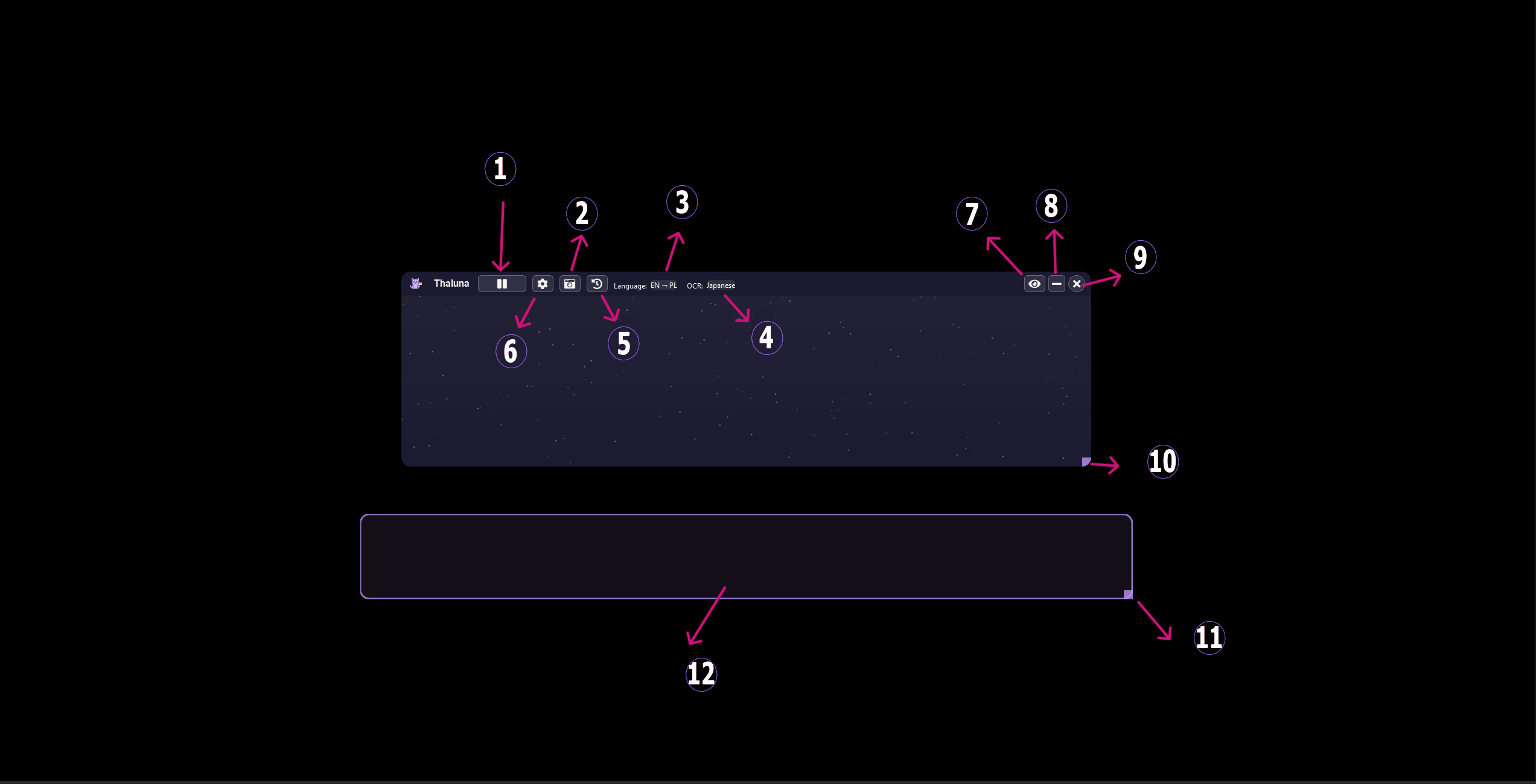

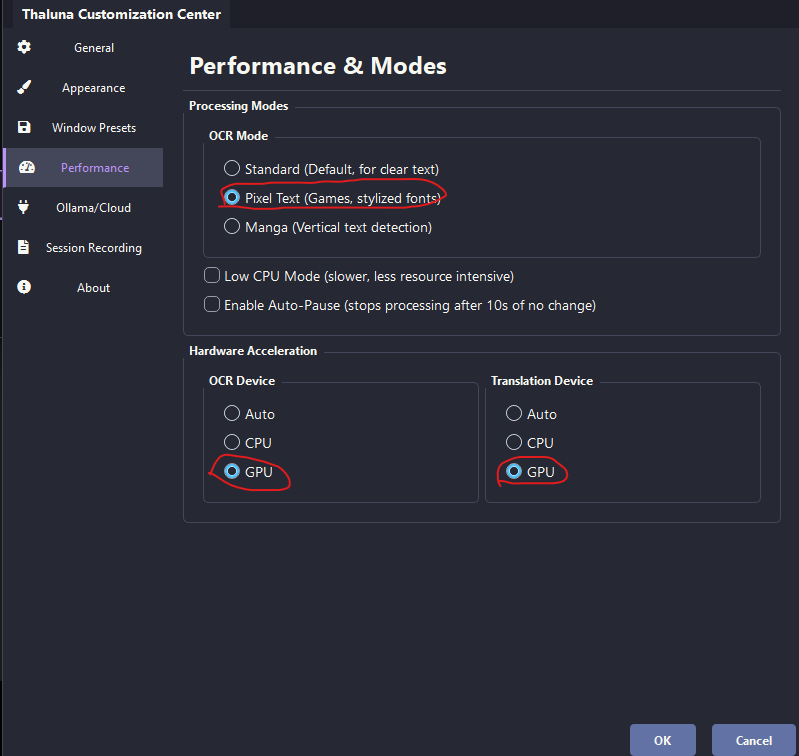

A typical setup for this hardware is:

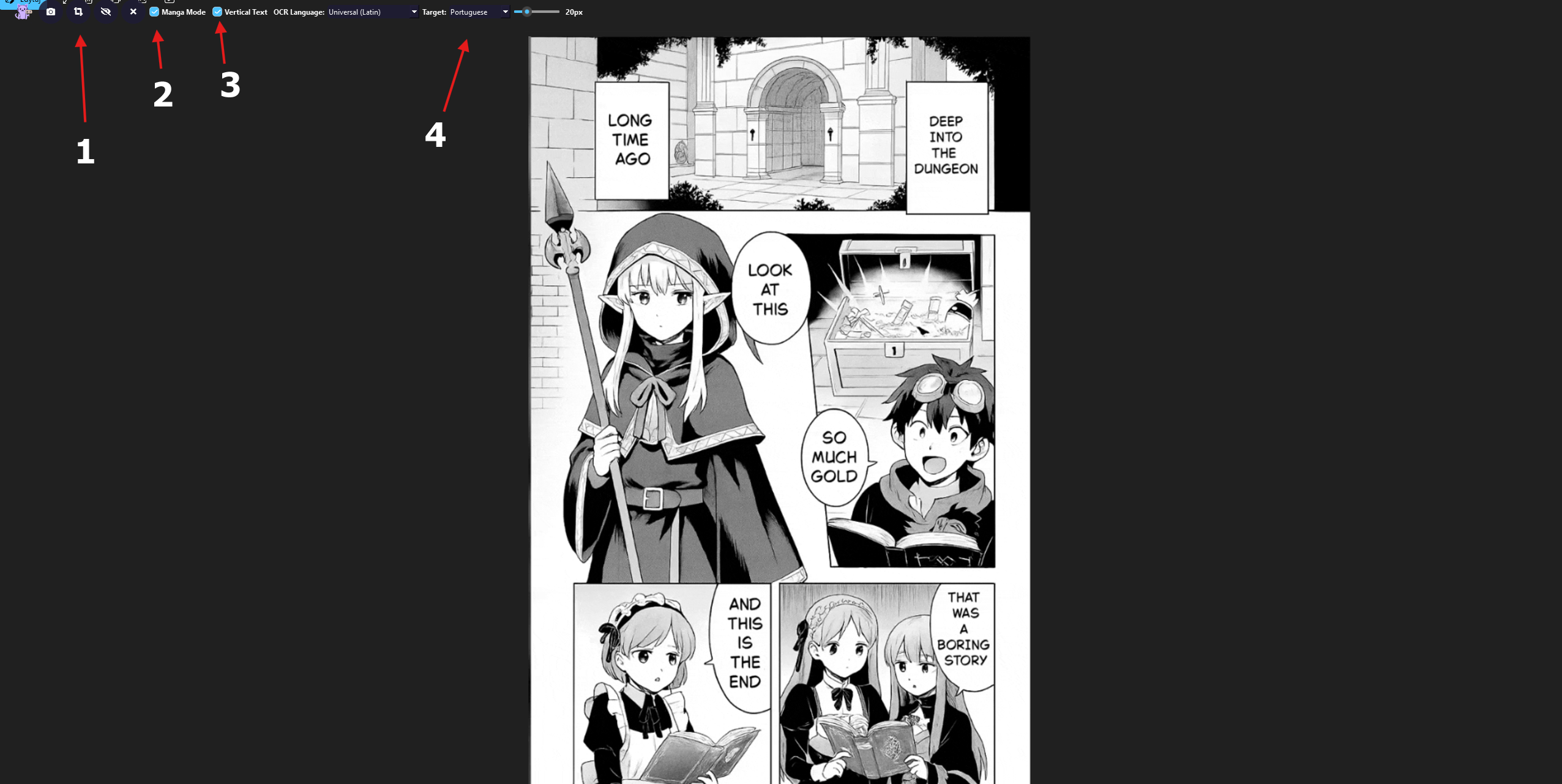

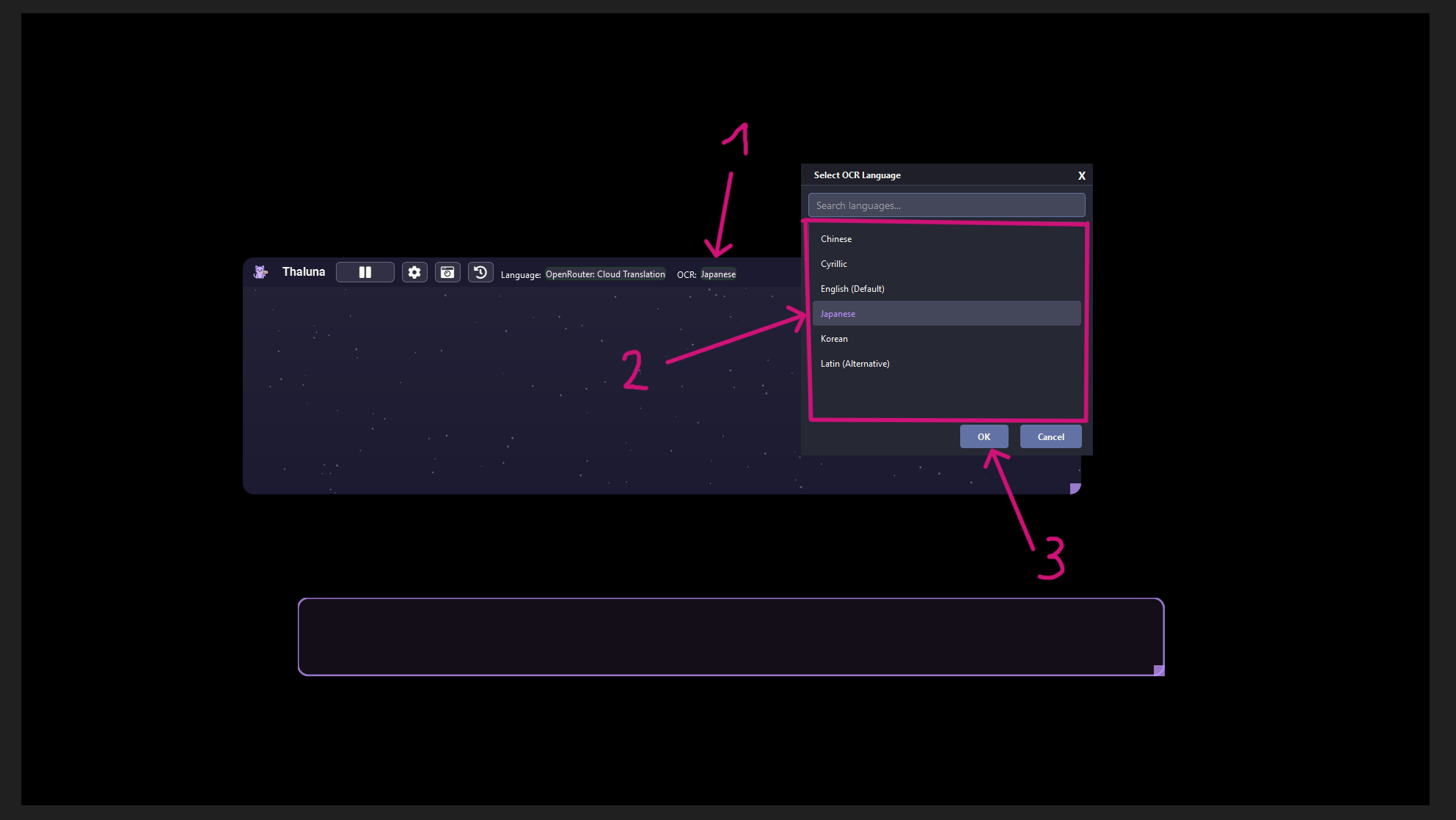

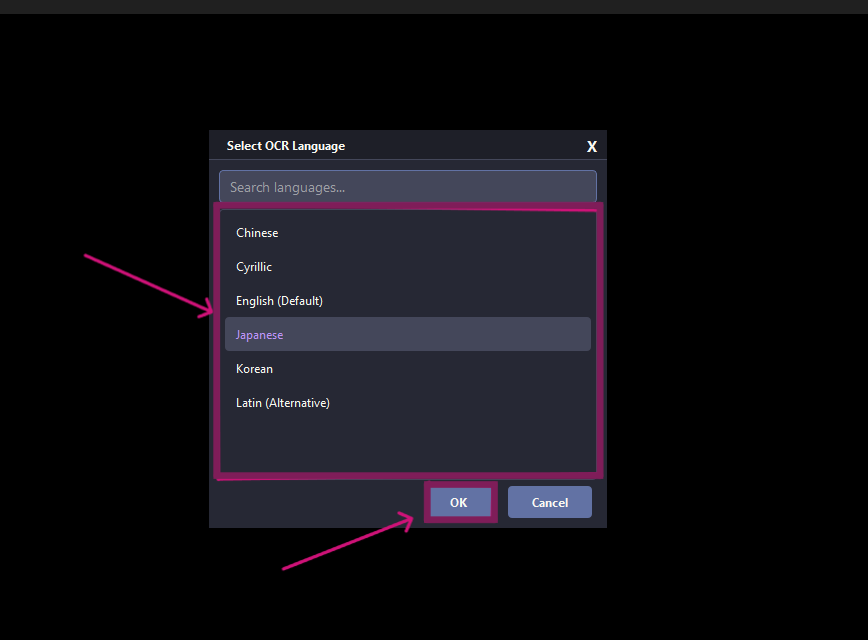

- OCR Device → CPU

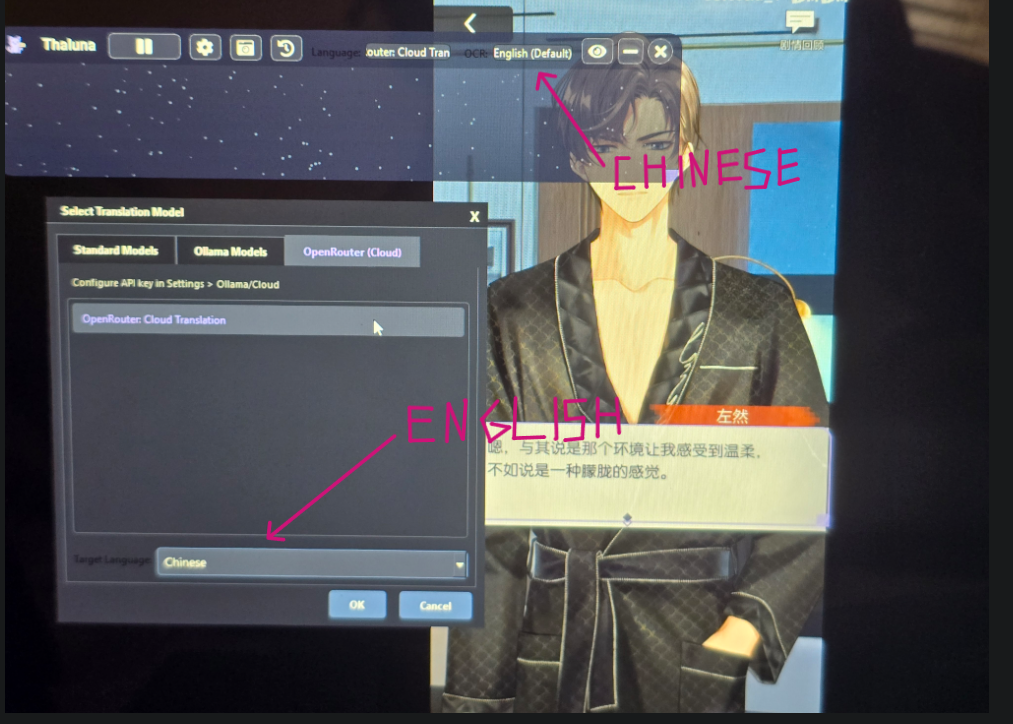

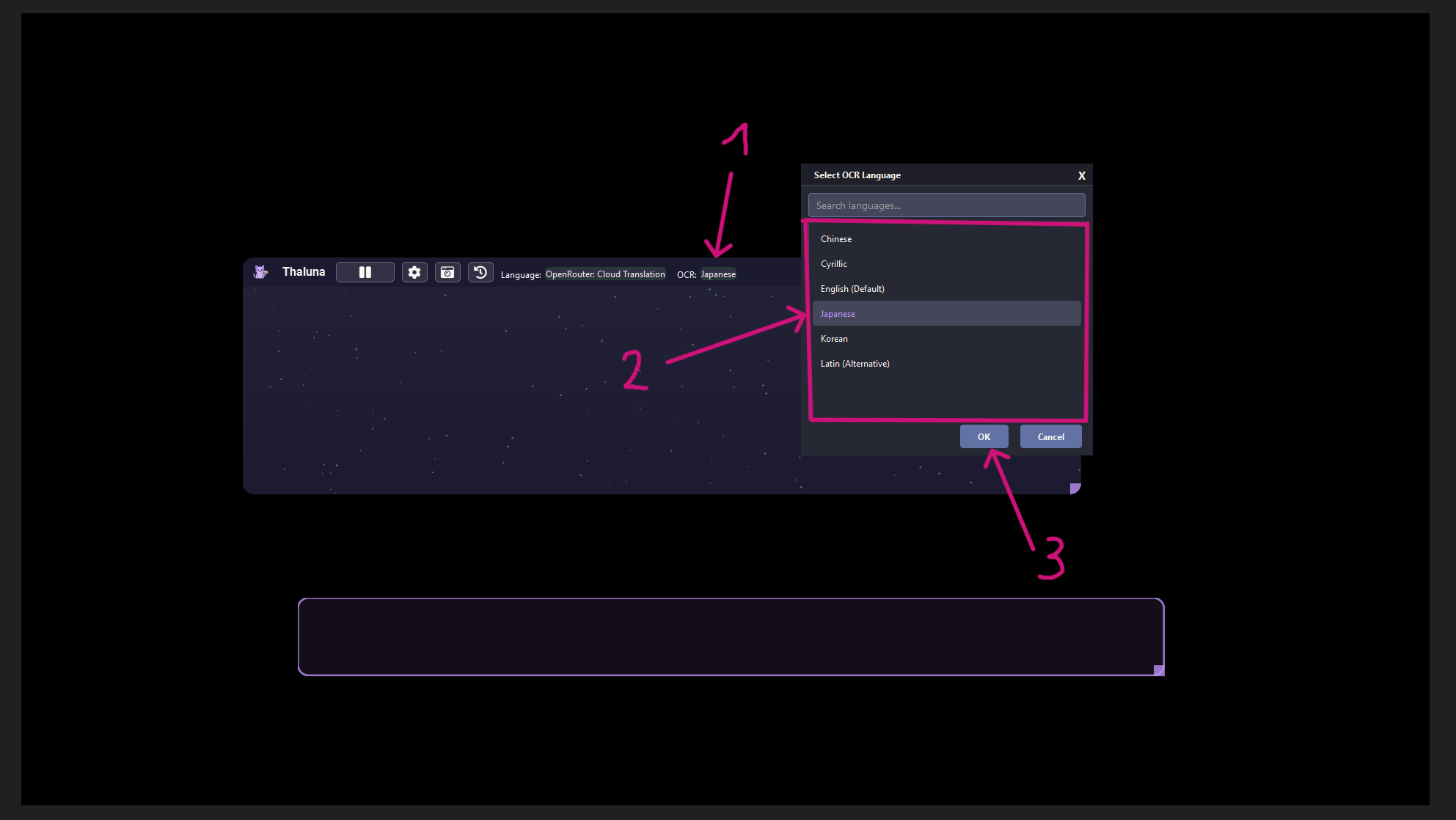

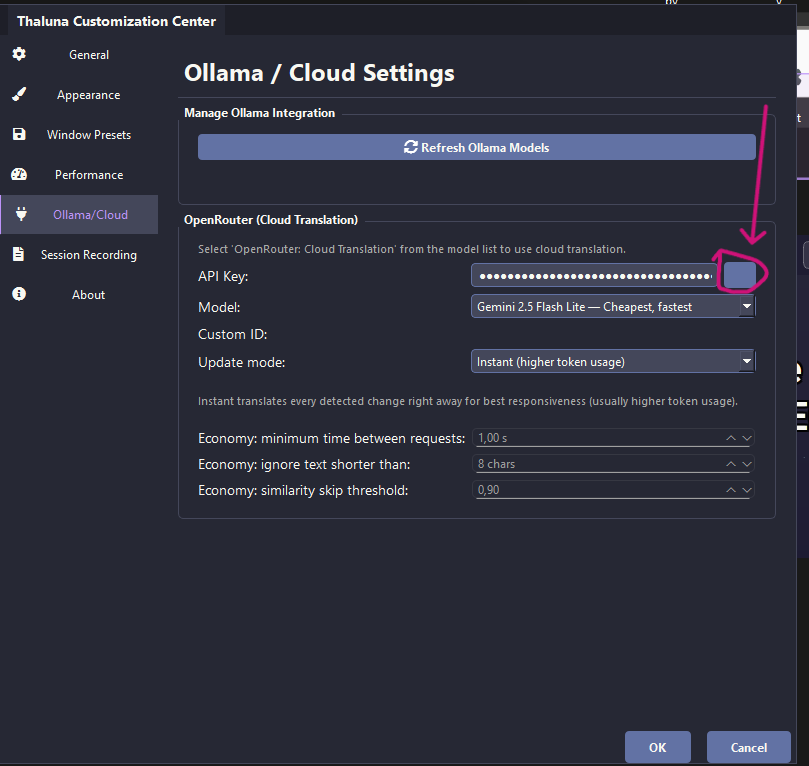

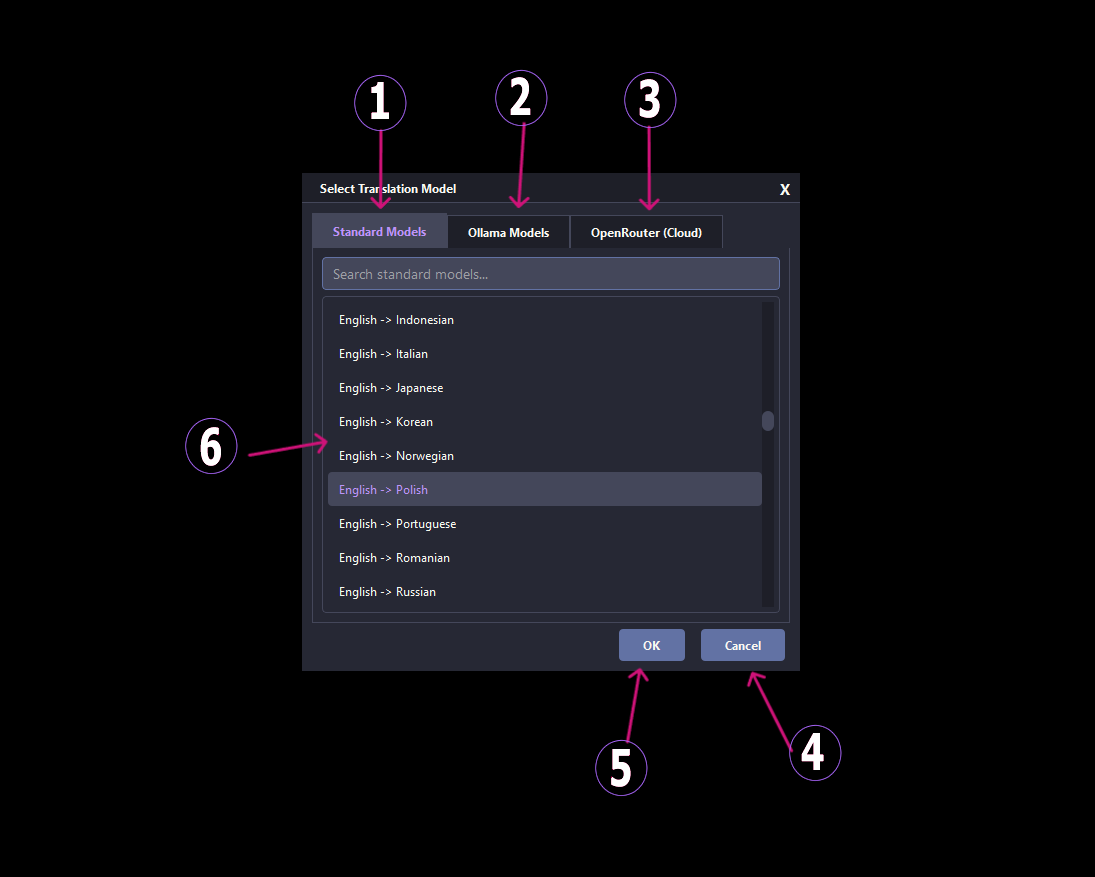

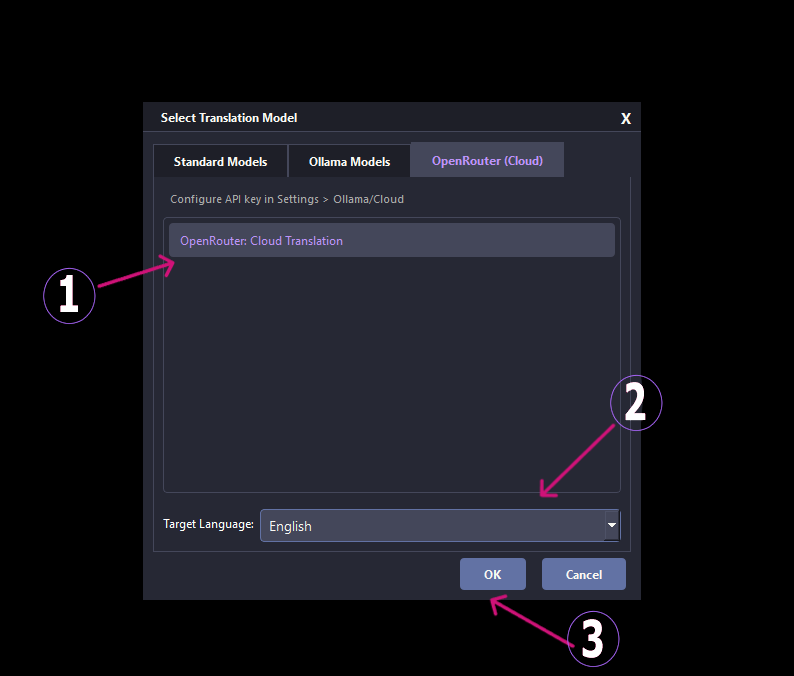

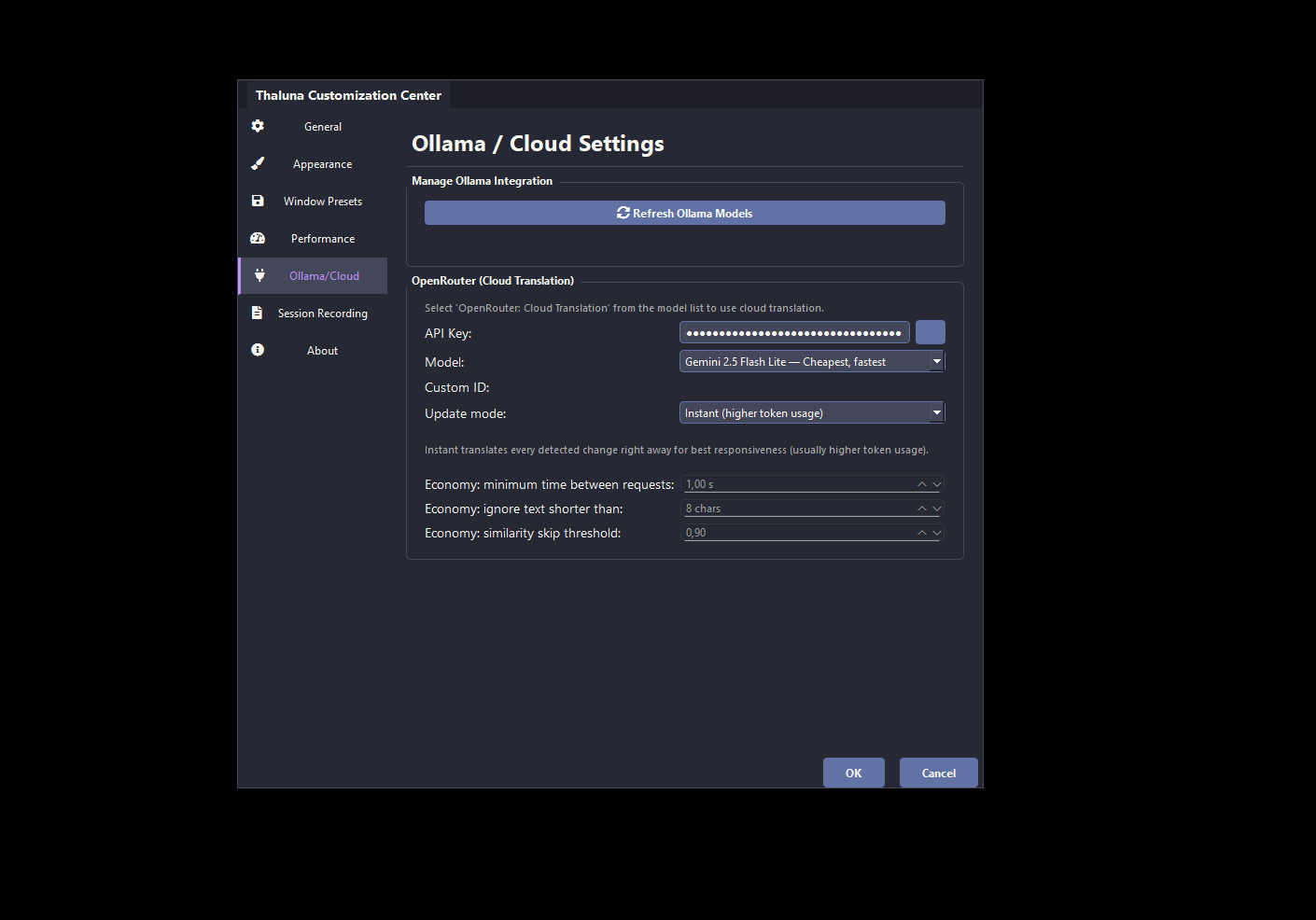

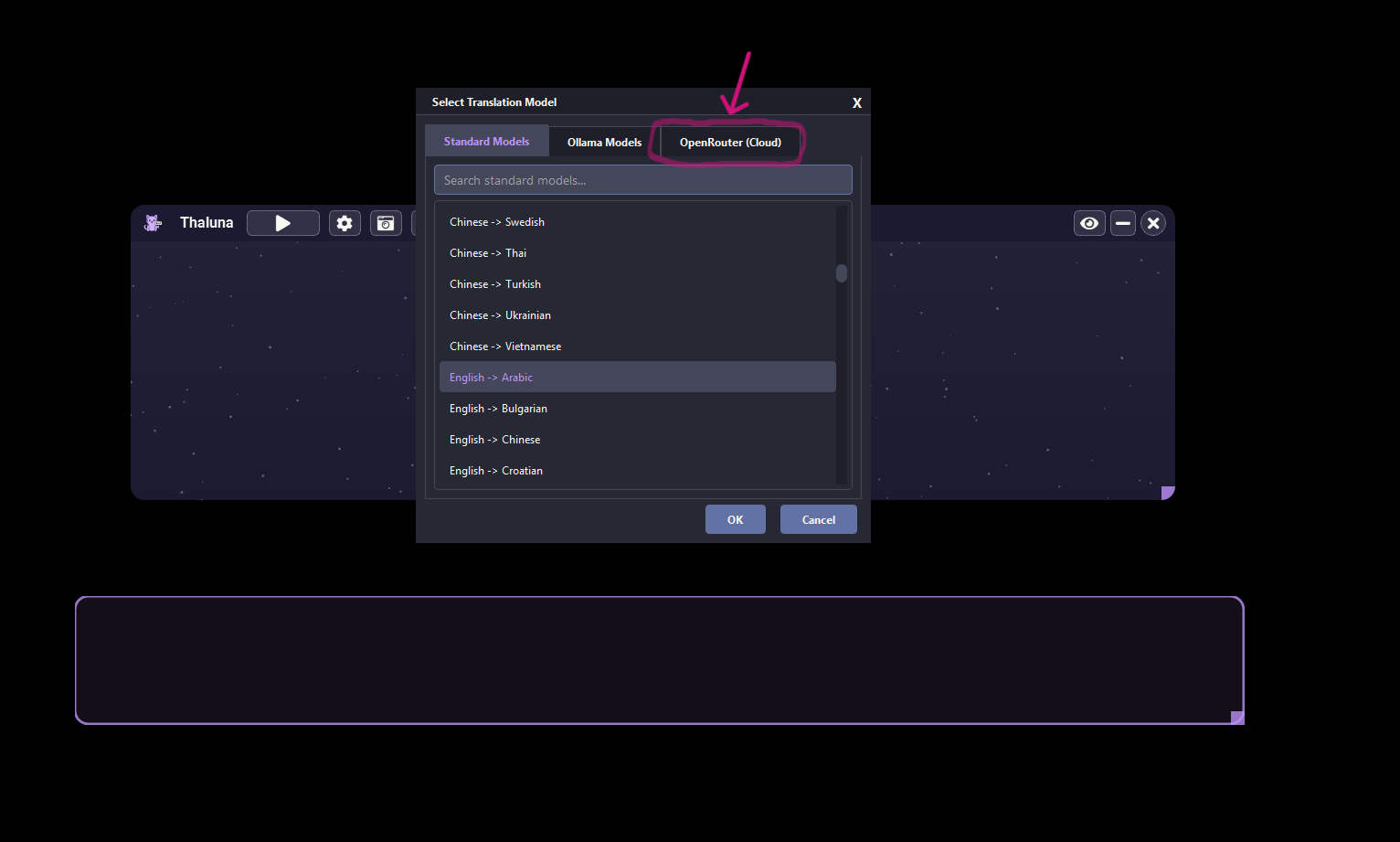

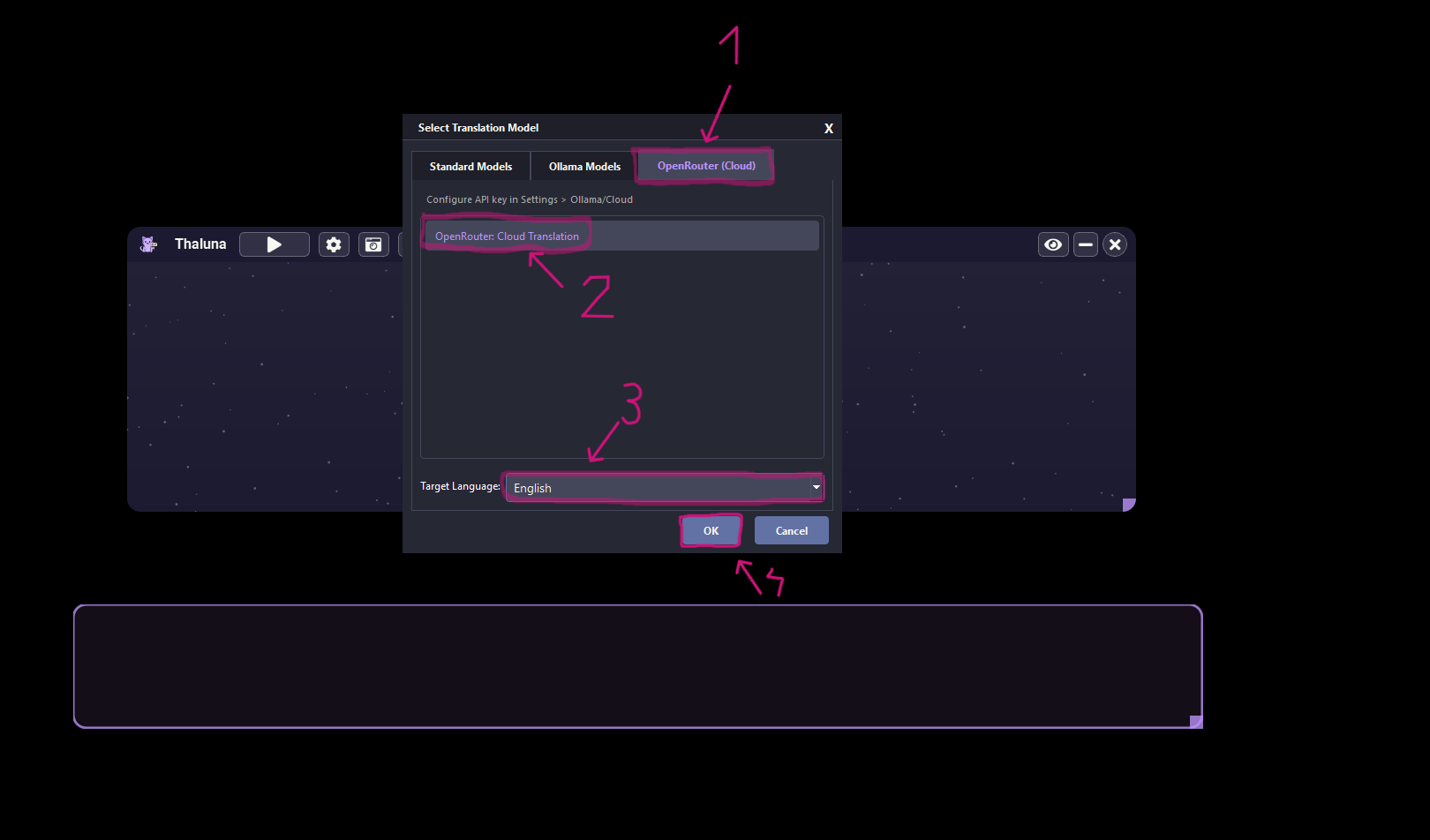

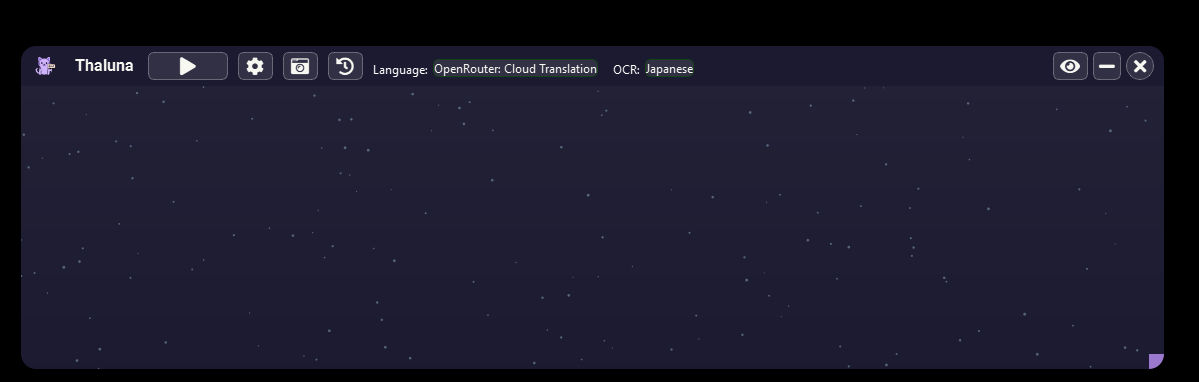

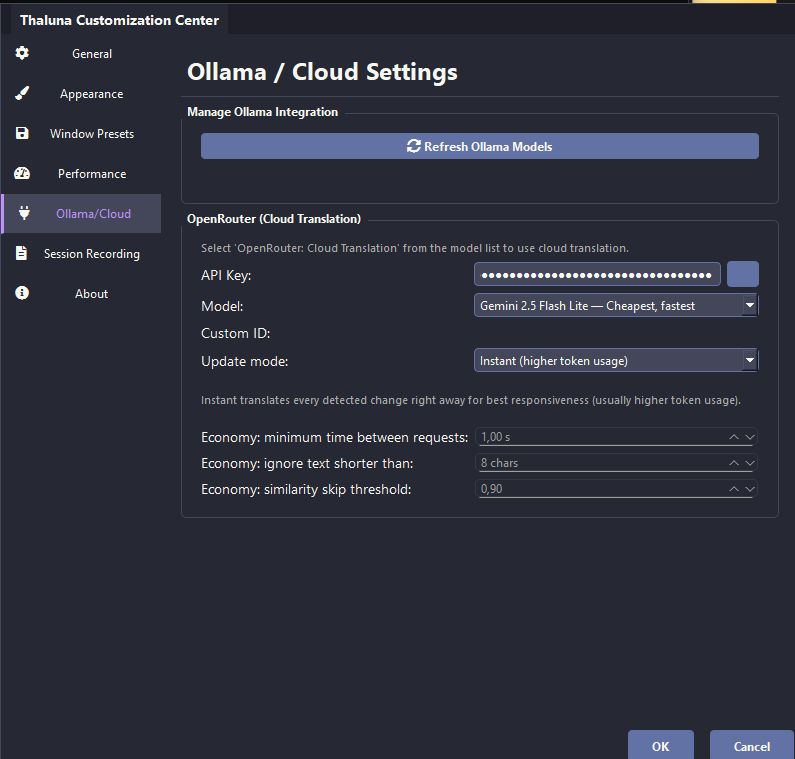

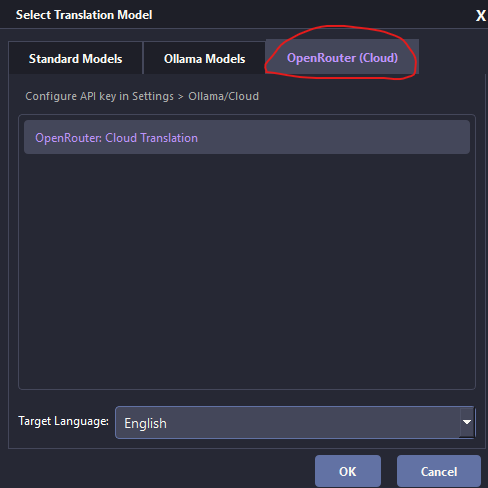

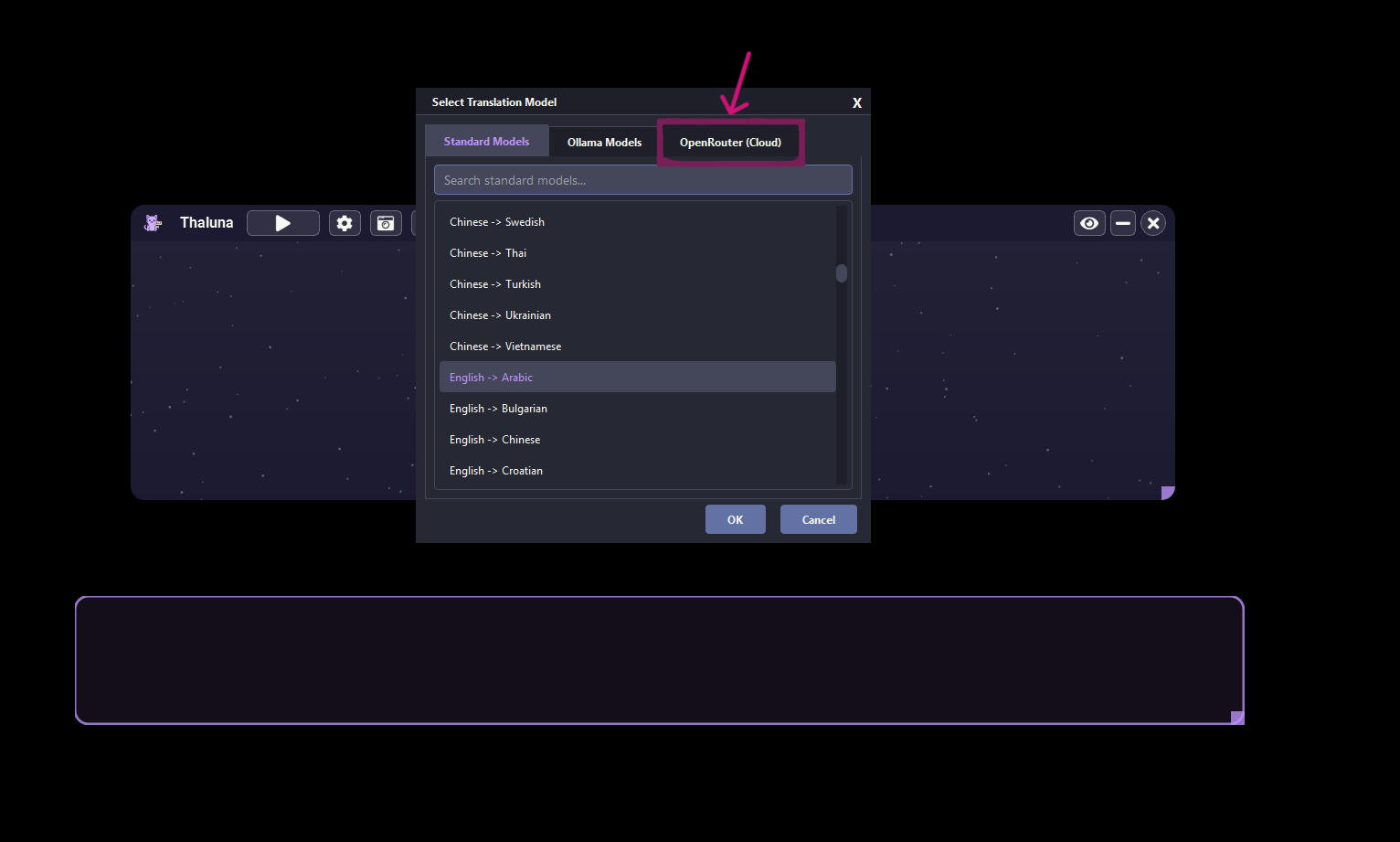

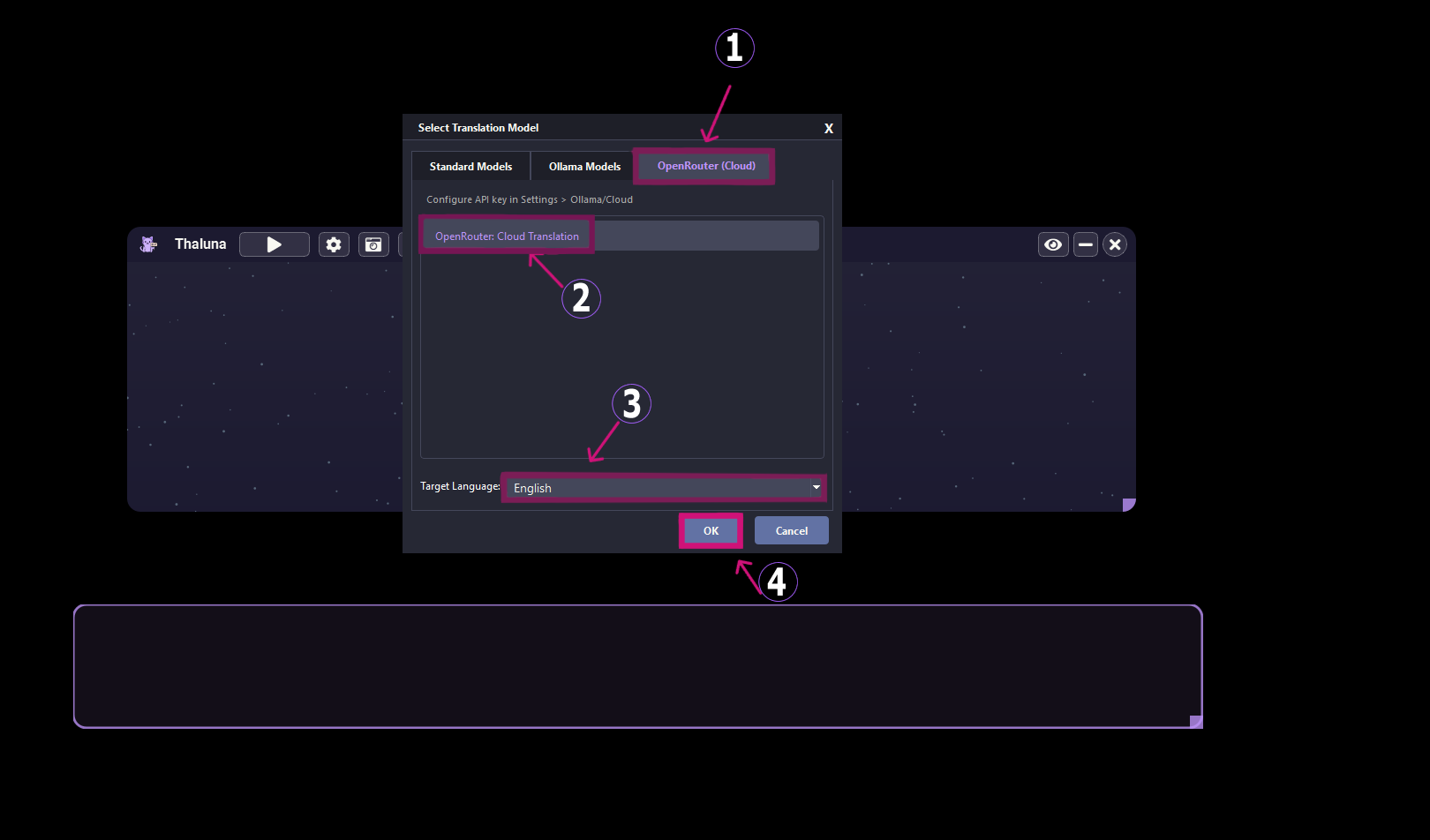

- Use OpenRouter for translation