According to Crystallize:

> AVIF: Utilizes the AV1 video codec to achieve superior compression rates, resulting in file sizes up to 50% smaller than JPEG and 20-30% smaller than WebP for equivalent quality levels.

Might be worth looking into, though we should also check how well AVIF is supported on the web.

Edit: Another quote:

> AVIF is supported since: Chrome version 85, Edge version 121, Firefox version 93 and Safari version 16, bringing full support since 2024-01-26.
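
To make those numbers easier to check against whatever browsers we care about, here is a minimal Python sketch. The version table is copied from the quote; the `supports_avif` helper and its arguments are made-up names for illustration, and on an actual site one would more likely rely on a `<picture>` fallback than on version sniffing.

```python
# Minimum major versions with AVIF support, as listed in the quote above.
AVIF_MIN_VERSION = {"chrome": 85, "edge": 121, "firefox": 93, "safari": 16}


def supports_avif(browser: str, major_version: int) -> bool:
    """Return True if the quoted table says this browser version handles AVIF."""
    minimum = AVIF_MIN_VERSION.get(browser.lower())
    # Browsers missing from the table are treated as unsupported (an assumption).
    return minimum is not None and major_version >= minimum
```

For example, `supports_avif("Edge", 120)` is false under this table, since Edge is the browser that only caught up at version 121.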

Edit2: Never mind:

> AVIF supports transparency for lossless images but doesn’t support transparency for lossy images. On the other hand, WebP is the only image format that supports RGB channel transparency for lossy images.

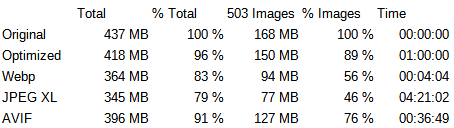

Looks like it won’t work for the use case. I wonder how well JPEG XL would have worked, if only Google hadn’t murdered it -_-

Edit3: Might still be worth considering for things like backgrounds, where alpha is not needed?
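
A rough sketch of what that split could look like, going by the quotes above: assets that need alpha stay on WebP, everything else goes to AVIF. The function name, its parameters, and the choice of WebP as the fallback are all my assumptions for illustration, not anything from the quoted sources.

```python
def pick_format(needs_alpha: bool, avif_ok: bool = True) -> str:
    """Choose an output format for one asset.

    needs_alpha: the asset has transparency that must survive lossy encoding.
    avif_ok: the target browsers can decode AVIF (hypothetical flag).
    """
    if needs_alpha:
        # Per the quote above, lossy transparency is the WebP case.
        return "webp"
    # Opaque assets such as backgrounds can take the smaller AVIF files.
    return "avif" if avif_ok else "webp"


# Hypothetical asset list: name -> whether alpha is needed.
assets = {"background": False, "sprite": True}
plan = {name: pick_format(alpha) for name, alpha in assets.items()}
```

With the example asset list, `plan` maps the background to AVIF and the sprite to WebP.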