Hello!

I've come across a niche feature that, as far as I can tell (without modifying STM's code myself), isn't available.

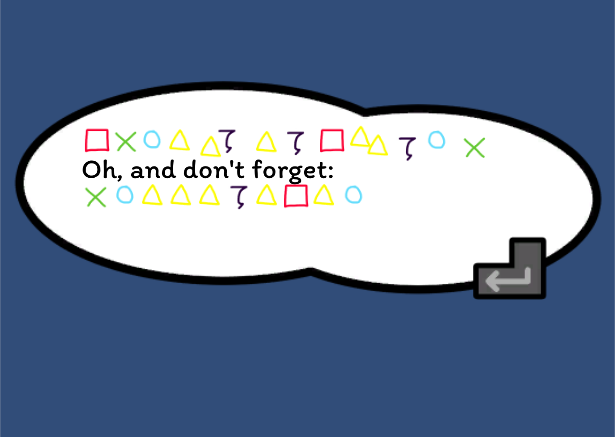

In <example game> I have certain glyphs that are displayed via quads (for this example, the traditional triangle, circle, square, and cross shapes). These glyphs are white (or black) so that they fit alongside other text. I would like to colour this quad-based text, and this is where we run into the first issue: quads cannot be coloured with a colour tag in the text inspector. Even if I write `<c=red><q=cross><q=triangle><q=circle></c>`, the cross, triangle, and circle will not render red. The same is true for a single quad, and for animated quads. Suggestion: Allow quad colours to be multiplied by a colour set in the text inspector. (Quad colours can be multiplied by a colour from the text inspector if the quad asset is marked as "Silhouette" in the asset inspector; thanks Kai!)

Well, if we can't set the colour in the text inspector, how about in the asset itself? Since these glyphs are just shapes, I'd be willing to make a separate asset for each shape-glyph. Unfortunately, STM doesn't support a colour on the asset either: even though a texture can be assigned to an STMQuad, no additional colour can be defined alongside it. This means that to change the colour of a glyph, I have to render the image in that exact colour, and re-render it every time I want a different colour. What a hassle! Suggestion: Allow quad colours to be multiplied by a colour set in the asset inspector.

(Quad colours cannot be set on the asset, but pre-parsing can automate colour assignment; thanks Kai! I learned something entirely new.)
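
As a rough illustration of what that pre-parsing step could do, here is a minimal sketch of the string transform only. It is written in Python rather than against STM's actual C# API, which it does not use. It wraps each known quad tag in a colour tag before the text would reach STM. The `QUAD_COLOURS` mapping and the `colour_quads` function are hypothetical names, and the colour tags would only tint quads marked "Silhouette", as mentioned earlier.

```python
import re

# Hypothetical mapping from quad names to STM colour names; adjust to your own assets.
QUAD_COLOURS = {
    "cross": "blue",
    "circle": "red",
    "triangle": "green",
    "square": "purple",
}

# Matches a quad tag such as <q=cross> and captures the quad name.
QUAD_TAG = re.compile(r"<q=([^>]+)>")


def colour_quads(text: str, colours: dict = QUAD_COLOURS) -> str:
    """Wrap each known quad tag in a <c=...></c> colour tag; leave unknown quads untouched."""

    def wrap(match: re.Match) -> str:
        name = match.group(1)
        colour = colours.get(name)
        if colour is None:
            # No colour assigned to this quad: keep the original tag as-is.
            return match.group(0)
        return f"<c={colour}>{match.group(0)}</c>"

    return QUAD_TAG.sub(wrap, text)


print(colour_quads("Press <q=cross> to jump, <q=star> to skip."))
# prints: Press <c=blue><q=cross></c> to jump, <q=star> to skip.
```

Keeping the colour assignments in one mapping means a glyph's colour changes in a single place instead of in every line of dialogue or in a re-rendered texture.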

Well, shoot. Maybe I can just export the glyphs in whatever colours I need and make a kind of "voice" out of them? If I can't colour them in the inspector or the asset, maybe I can have something that automatically draws them in those colours based on what I type! Alas, while STM does have a "voice" feature for audio clips, there doesn't appear to be an equivalent for quads. This is such a missed opportunity! With a system like that, you could effectively make your own hieroglyph system for NPCs, or hide secrets in plain sight! It could even give more creative freedom with text, such as animated text drawn from images in a character map. This could let more games emphasise a "hand-drawn" feel or other atmospheric effects (as typically seen in "memory" or "dream" sequences). You could even combine this feature with the existing audio 'voices' by assigning audio clips to each glyph variation. Suggestion: Create the ability to use a character map for text as a new kind of "voice", and allow audio voices to read out those characters.

(I originally meant having colour assignments as part of a voice definition, similar to how STMSoundClips work, but pre-parsing solves this. This also touched on making quads audible, which is now a planned feature!)

Then again, maybe that last one is a lot of work, not just for Kai but for users as well! Managing glyph variations, colours, and audio readouts for what might amount to a single NPC in an entire game? What an edge case. Maybe I should instead relegate these glyphs to a whole new custom font, something that definitely works with colours, effects, and audio readouts. Even then, I'd still have to manually type out each colour or effect for a character if I want them to be consistent (or even randomised). Perhaps all of this could be solved by a different approach: not a new voice feature, but an expansion of the current one. Voices already support defining text and effects for a 'voice', so how about also allowing a voice to define what effects each character has? We can already set up voices to play a sound for specific characters (with the drawback that every other readout of those characters, regardless of voice, plays it too). So why not extend that feature to quads, colours, and fonts as well? This would make managing text in STM much more powerful. Letting a voice define audio, glyphs, and/or colours per character, depending on which voice is reading the text, makes resources easier to manage and text far more expressive. Suggestion: Allow STMAudioClips groups, STMColours/STMGradients/STMMaterials, and/or STMQuads to be defined per character in an STMVoices asset.

I think that is all for my over-the-top suggestions. Several of my friends, and many strangers I've seen, long for features like this in text tools, especially those coming from GML (GameMaker Language), where character maps are used far more than fonts, or fonts are converted into character maps. Between that and the missing basic colour features for quads, some tools in this large and amazing asset seem to fall just short of being "a master of its craft".

Lastly, I have a question. In the past, I kept my own STM assets in a folder separate from STM's 'Resources' folder, and STM did not read or recognise them there. Does STM search the whole project for STM assets, or does it only pull from its own folder? While sample resources are always appreciated, user-made resources can get lost among them and be hard to find without separate organisation. If STM doesn't support this, adding it would be greatly appreciated and would fit better with the rest of Unity. (Extra assets can be organised outside /Clavian by creating another 'Resources' folder and putting the assets in a subfolder named after the asset type; a little roundabout, but just as effective!)

Thank you!