> The game has been out for over a year now, and AI models have more data and have changed how their algorithms process that data.

Sadly, this isn't true for small, creative-writing-focused LLMs. When I previously said something along the lines of more modern models handling Gareth differently, I was talking about Mistral Small 3.2 (June 2025) and DeepSeek V3 0324 (March 2025). If you're using Nemo, which I assume you are since you're complaining about personalities bleeding together, it is still the same old model the game has been using since day one. There has been an experiment with a finetune of Nemo, which is probably why you noticed Gareth's personality being different: the finetune characterizes him similarly to how Small and DeepSeek do.

Nemo is a model from July 2024, and nothing has beaten it at creative writing within a 10 GB VRAM footprint since then. Many parts of the harness the model runs inside have been improved, so it might not feel like the same model as back then, but it is.

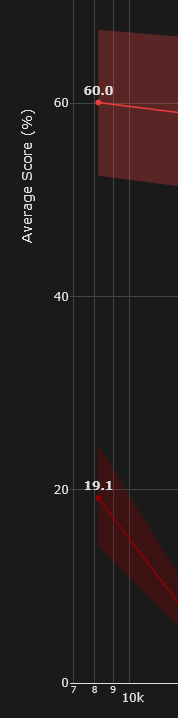

For reference, the bottom line is Nemo's chance of correctly retrieving information from a 6,000-word corpus, and the top line is the same measure for Mistral Small. This affects everything, from recalling an NPC's personality to remembering that it has already pestered you with the same question twice.

You really shouldn't expect brilliant memory or output variety from Nemo. I highly recommend you try a fresh save file with DeepSeek. I'm confident you will be blown away by how varied the characters can be.