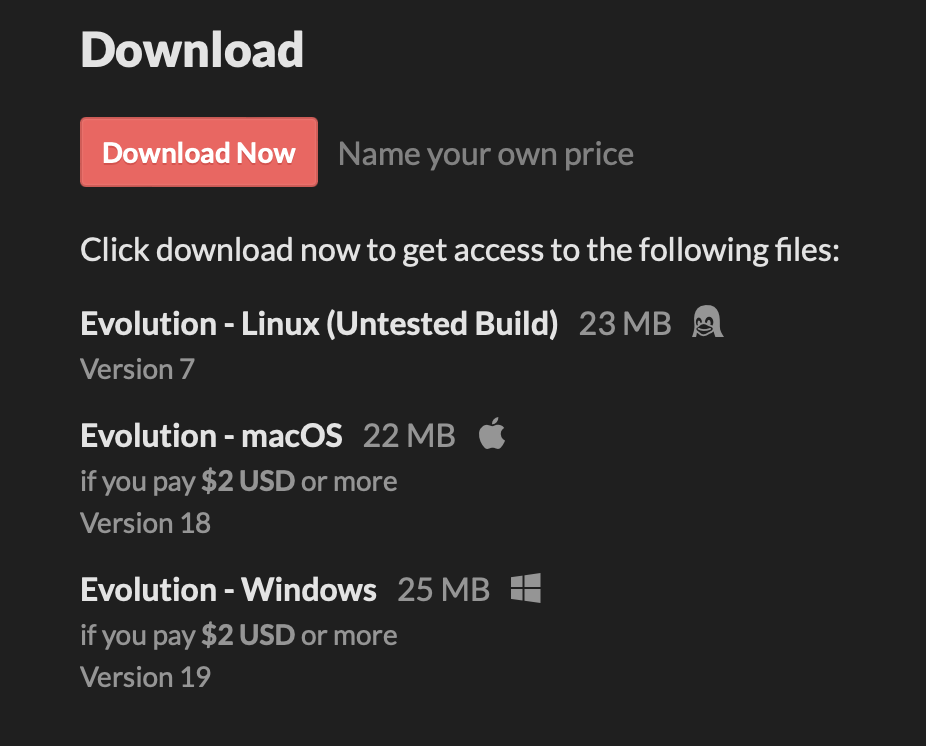

Hi!

You can find the game's data (creature saves, simulation saves and gallery saves) here:

C:\Users\<your_username>\AppData\LocalLow\Keiwan Donyagard\Evolution\
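If you'd rather check that folder from a script, here is a minimal Python sketch. It only assumes the folder layout quoted above; the function names `evolution_data_dir` and `list_saves` are made up for this example, and I'm not assuming anything about the file names or subfolders the game creates inside.

```python
from pathlib import Path

# Folder names taken from the path quoted above.
SAVE_SUBDIR = Path("AppData") / "LocalLow" / "Keiwan Donyagard" / "Evolution"


def evolution_data_dir(home=None):
    """Return the Evolution data folder for the given (or current) user profile."""
    base = Path(home) if home is not None else Path.home()
    return base / SAVE_SUBDIR


def list_saves(data_dir):
    """Return the relative paths of all files below data_dir (empty if it is missing)."""
    data_dir = Path(data_dir)
    if not data_dir.is_dir():
        return []
    return sorted(
        p.relative_to(data_dir).as_posix()
        for p in data_dir.rglob("*")
        if p.is_file()
    )


if __name__ == "__main__":
    folder = evolution_data_dir()
    print(folder)
    for name in list_saves(folder):
        print("  ", name)
```

Copying the whole folder somewhere else before you delete anything gives you a backup.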

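Unity stores player preference data (PlayerPrefs) in the registry on Windows. For the installed game, you can find and delete the values for Evolution in the Windows Registry Editor under

Computer\HKEY_CURRENT_USER\Software\Keiwan Donyagard\Evolution

(The same key under Software\Unity\UnityEditor\ is only used when a project is run from inside the Unity Editor.)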
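If you want to look at those values before deleting them, here is a small read-only Python sketch using the standard `winreg` module (Windows only). Unity documents two possible PlayerPrefs locations, one for built games and one for projects run in the editor, so the sketch checks both. The helper names `candidate_keys` and `read_values` are just for this example.

```python
import sys

COMPANY, PRODUCT = "Keiwan Donyagard", "Evolution"


def candidate_keys(company=COMPANY, product=PRODUCT):
    """Return the HKEY_CURRENT_USER subkeys where Unity may store PlayerPrefs."""
    return [
        "\\".join(["Software", company, product]),  # built game
        "\\".join(["Software", "Unity", "UnityEditor", company, product]),  # run in editor
    ]


def read_values(subkey):
    """Return {name: data} for one subkey, or None if the key does not exist."""
    import winreg  # only available on Windows

    try:
        key = winreg.OpenKey(winreg.HKEY_CURRENT_USER, subkey)
    except FileNotFoundError:
        return None
    with key:
        value_count = winreg.QueryInfoKey(key)[1]
        values = {}
        for i in range(value_count):
            name, data, _type = winreg.EnumValue(key, i)
            values[name] = data
        return values


if __name__ == "__main__" and sys.platform == "win32":
    for subkey in candidate_keys():
        values = read_values(subkey)
        if values is None:
            print(subkey, "(not found)")
        else:
            print(subkey)
            for name in values:
                print("  ", name)
```

This only lists what is there. Deleting is still easiest in the Registry Editor itself.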

You can't choose the resolution at startup any more because that startup dialog was a Unity feature, and Unity removed it completely in newer versions. Since the v4 update of Evolution, you can go to the settings under the "General" tab and switch "Window Mode" between "Windowed" and "Fullscreen". In Windowed mode, you can resize the window to your liking.