just found this from 2004...might be of interest

catalystman

Recent community posts

Just some feedback on current playability... I find the ++ render distance is best by far. I can kill the first 5 NPCs and get myself a shotgun, but I can't find my way out of the first chamber.

Some people are commenting that they get headaches or eye strain. For me it is quite the opposite: I would much prefer to view in the parallel mode, as I find it far less straining on the eyes.

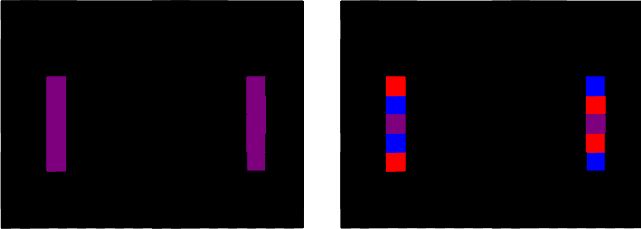

This is how you apply colour. The paradigm shift comes when you realise consecutive frames can be used to "stabilize" the autostereogram.

On the left is a single-colour stereogram: RGB (0.5, 0, 0.5).

On the right is the same stereogram, but its colour is mainly distributed between left and right: RGB (1, 0, 0) and (0, 0, 1). (I have included 1 matched pixel to help with alignment.)

In the distributed stereogram your eyes will fight for dominance, but if you swap the location of left and right on each consecutive frame you will negate this. We used a simple GIF animator to test this. But if you stay very still and stare long enough you will see the effect.
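A minimal sketch of that frame swap, just to show the arithmetic. The function names are made up for illustration and this is not taken from the game's code: each eye's patch alternates between pure red and pure blue, and the average over two frames lands back on the original purple.

```python
# Hypothetical names; a sketch of the left/right swap, not the actual renderer.
TARGET = (0.5, 0.0, 0.5)   # the purple of the single-colour stereogram
RED = (1.0, 0.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

def eye_colours(frame):
    """Return (left, right) colours for a frame, swapped on every other frame."""
    return (RED, BLUE) if frame % 2 == 0 else (BLUE, RED)

def time_average(colours):
    """Average a list of RGB tuples channel by channel."""
    n = len(colours)
    return tuple(sum(c[i] for c in colours) / n for i in range(3))

# What each eye's patch shows over two consecutive frames.
left_seen = [eye_colours(f)[0] for f in range(2)]
right_seen = [eye_colours(f)[1] for f in range(2)]

print(time_average(left_seen), time_average(right_seen))
```

Both averages come out equal to `TARGET`, so neither eye is stuck with one colour for long.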

The trick would be to distribute the colour in such a way that the image can't be seen (well) outside of the parallel and crossed modes.

You have obviously chosen the depth of field and DPI values carefully. If you can cycle through all the values that give a good separation, you may approximate a 3D scene.

You could have a separation of half a pixel; it's just that this pixel takes on 2 values over 2 consecutive frames.

To this end you could have any fraction of a pixel, within reason of frame rate, bit depth and human perception.
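One way to picture the fractional separation, as a sketch only: pick a whole-pixel shift on each frame so that the running total tracks the fractional target. `integer_shift` is a hypothetical helper, and the error-accumulation rule it uses is my assumption about how you might schedule the shifts, not something from the game.

```python
from fractions import Fraction
from math import floor

def integer_shift(separation, frame):
    """Whole-pixel shift for this frame so the running sum follows `separation`."""
    return floor((frame + 1) * separation) - floor(frame * separation)

# A separation of 2.5 pixels alternates between 2 and 3 pixels.
sep = Fraction(5, 2)
shifts = [integer_shift(sep, f) for f in range(4)]
print(shifts)                      # [2, 3, 2, 3]
print(sum(shifts) / len(shifts))   # 2.5
```

The same rule works for other fractions (thirds, fifths and so on); the finer the fraction, the more frames it takes to average out, which is where frame rate becomes the limit.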

Okay, I understand: it converts to 4-bit greyscale, thus 16 depths. So it needs to convert to 8-bit instead, thus 256 depths, but grab every 16th layer on one frame, then shift forward one level and grab every 16th + 1 depth on the next, 16th + 2 on the next, and so on. That seems relatively simple. It means the full set of 256 depths will be displayed about 4 times every second, which sounds perfect. Could implement Fibonacci later, but keep it simple for now I suppose.
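Roughly what that layer cycling looks like, as a sketch. The names are hypothetical and the 60 fps figure is an assumption on my part; at that rate the full set of 256 depths comes round 3.75 times a second, which is where "about 4" comes from.

```python
TOTAL_DEPTHS = 256          # 8-bit depth map
LAYERS_PER_FRAME = 16       # what a 4-bit depth map would give per frame
STRIDE = TOTAL_DEPTHS // LAYERS_PER_FRAME   # 16

def depths_for_frame(frame):
    """Depth layers drawn on this frame: every 16th layer, offset by the frame number."""
    offset = frame % STRIDE
    return list(range(offset, TOTAL_DEPTHS, STRIDE))

print(depths_for_frame(0)[:4])   # [0, 16, 32, 48]
print(depths_for_frame(1)[:4])   # [1, 17, 33, 49]

FPS = 60                          # assumed frame rate
print(FPS / STRIDE)               # 3.75 full passes over all 256 depths per second
```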

The stereogram essentially consists of 2 images constructed of noise that has been shifted. Distribute the RGB colour across each image and across frames (across space and time). So say you want a pixel to be purple: the corresponding left and right 2x5 pixel groups might be a collection of red and blue (#FF0000 and #0000FF) in a random distribution across the screen and across sequential frames.

The big improvement will come if you can manage to implement the depth shifting, which I feel comes hand in hand with the pixel shifting. This will give you full screen resolution and infinite depth, and it negates aliasing.

Might sound hard to believe, but I had this very idea about 12 months ago, so I've had some time to think about this. We were going to use idtech4 BFG and integrate other C++ physics simulators to develop and train A.I., but our principal programmer had R.L. issues so we never got around to really starting. I hope you appreciate what you are about to unleash: turning every 2D screen into a 3D puppet show. The games will be one thing... the applications in real-time medical imaging... well, let's just say you're a pioneer sir, and it's a real honor to greet you.

From what I can see you are using a 3x3 block as a pixel. If you shift the 3x3 each frame by 2 pixels in both x and y you can achieve an effective "screen resolution". FYI 2/3 is a Fibonacci ratio, but as mentioned you will benefit from an aspect ratio longer in the vertical. Perhaps a 2H x 5V block with the pixel shifting 3H and 8V each frame (again this is using Fibonacci).
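A quick sketch to see which sub-block offsets each shifting scheme actually visits. `offsets` is a hypothetical helper, and I am assuming the shift simply wraps around the block size each frame.

```python
def offsets(block_w, block_h, step_x, step_y, frames):
    """Sub-block (x, y) offset on each frame when the grid shifts by a fixed step."""
    return [((f * step_x) % block_w, (f * step_y) % block_h) for f in range(frames)]

# 3x3 block shifted by 2 in both x and y: repeats after 3 frames.
square = offsets(3, 3, 2, 2, 3)
print(square)                 # [(0, 0), (2, 2), (1, 1)]

# 2H x 5V block shifted by 3H and 8V: repeats after 10 frames.
strip = offsets(2, 5, 3, 8, 10)
print(len(set(strip)))        # 10
```

With equal steps in x and y the 3x3 case only walks the diagonal (3 of the 9 positions), whereas the 2x5 block with 3 and 8 steps lands on all 10 positions before repeating, so the tall block gets full coverage in this simple model.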

I can see you are using 4(?) colours in your random colour. If you use 3 (RGB) you should be able to implement full colour. Just split your actual colour "randomly" (phi) across both images in a "randomly" cycling manner, giving an average of the actual colour.
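Here is one way the split could work, as a rough sketch. `split_colour` is a made-up name, and I have swapped in Python's ordinary pseudo-random generator where a phi-based scheme would go: each channel is pushed up in one image and down by the same amount in the other, so the pair always averages to the real colour.

```python
import random

def split_colour(target, rng):
    """Split an RGB colour (channels in 0..1) into a left/right pair with the same average."""
    left, right = [], []
    for c in target:
        room = min(c, 1.0 - c)        # furthest we can move and stay inside 0..1
        d = rng.uniform(-room, room)  # random offset for this channel
        left.append(c + d)
        right.append(c - d)
    return tuple(left), tuple(right)

rng = random.Random(0)                # fixed seed so the sketch is repeatable
target = (0.5, 0.0, 0.5)              # the purple example
left, right = split_colour(target, rng)
average = tuple((l + r) / 2 for l, r in zip(left, right))
print(left, right, average)
```

Calling `split_colour` again on the next frame gives a different pair, which is the "randomly cycling" part.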

Full colour and full screen resolution are the easier bit.

Infinite depth is possible when each frame consists of part of an image from each depth.

At the moment you are displaying 16(?) depths on each frame, so display 16 shifting depths. Shift each of those depths by a fraction of 0.618 (phi) of the space between depths, but only render part of each depth, in a "random" distributed cycle across the screen. So each 2x5 pixel group on one frame on either side of the screen has a partner (or at most a partner in the frame before or after it), but each adjacent 2x5 pixel group will likely represent a different depth, and each pixel on the screen is likely changing on each frame. This should give you your "infinite" depth autostereogram (limited only by the screen resolution).
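A sketch of the 0.618 depth shift, under my own assumption that the offset is simply the fractional part of frame number times 0.618. The names are hypothetical. The nice property is that the offsets never settle into a short repeating pattern, so the 16 layers keep landing in new places between the original depths.

```python
INV_PHI = (5 ** 0.5 - 1) / 2      # 0.6180339887..., the 0.618 fraction

def depth_offset(frame):
    """Fractional shift (0..1 of the gap between layers) applied on this frame."""
    return (frame * INV_PHI) % 1.0

def shifted_depths(frame, layers=16):
    """The 16 layer positions for this frame, in units of the layer spacing."""
    off = depth_offset(frame)
    return [layer + off for layer in range(layers)]

offsets_seen = [depth_offset(f) for f in range(16)]
print([round(o, 3) for o in offsets_seen[:5]])   # [0.0, 0.618, 0.236, 0.854, 0.472]
```

This only covers the depth shift itself; choosing which part of each depth gets rendered on a given frame would be a separate step.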

Phi is irrational and will be impossible to work with exactly, so using ratios of Fibonacci numbers, which are rational, will make this possible:

0, 1, 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, 144, 233, 377, 610, 987, 1597, 2584, ...
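To show how quickly those ratios close in on 0.618, here is a small sketch (the function name is just for illustration). Each consecutive pair in the sequence gives a rational stand-in for the irrational value.

```python
def fibonacci(n):
    """First n Fibonacci numbers, starting 0, 1, 1, 2, ..."""
    seq = [0, 1]
    while len(seq) < n:
        seq.append(seq[-1] + seq[-2])
    return seq[:n]

fib = fibonacci(19)               # ends at 2584
inv_phi = (5 ** 0.5 - 1) / 2      # 0.6180339887...

# Ratio of each number to the next one: 1/1, 1/2, 2/3, 3/5, 5/8, ...
for a, b in zip(fib[1:], fib[2:]):
    print(f"{a}/{b} = {a / b:.6f}  (error {abs(a / b - inv_phi):.6f})")
```

Small pairs such as 2/3, 3/5 and 5/8 are already within a few percent, which is why block sizes and shifts taken from the sequence are a practical substitute.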

I have been consulting with a colleague and very good friend about this, a neuroscientist specializing in vision science. You will gain a massive improvement in a person's susceptibility and vision stability by making the random "dot" aspect ratio longer in the vertical, so it becomes a random "strip" autostereogram (e.g. 1 pixel wide x 5 pixels high). There may also be ways to optimize the "randomness" using the irrational "Phi" in whatever algorithm you are using. We have discussed ways to make the image full colour with alternating and mixed RGB between both images; frame rate will be limiting. Looking forward so much to seeing this develop...