This jam is now over. It ran from 2022-12-16 17:00:00 to 2022-12-18 17:15:00.

🧑‍🔬 Join us for this month's alignment jam! Get answers to all your questions in this FAQ. See all the starter resources here. Go to the Discord (important) and GatherTown here.

Compete to find the best ways to test what AIs do, how fair they are and how easy they are to fool!

This month's alignment jam is about TESTING THE AI! Join to find the most interesting ways to test what AIs do, how fair they are, how easy they are to fool, how conscious they become (maybe?), and so much more.

We provide State-of-the-Art resources for testing the newest models, so you can focus on neural network verification, creating fascinating datasets, building interesting reinforcement learning agents, extracting interpretable features from deep Transformers, training safe RL agents in choose-your-own-adventure games, and red teaming the heck out of current models.

Schedule

- Friday: We start with an introduction to the starter resources and a talk by an expert in the field

- Friday until Sunday: Hacking away on interesting research projects!

- Sunday: Time for community judging and later, the winners' ceremony

Where can I join?

How do I participate?

Create a user on this itch.io website and click Join jam. We will assume that you are going to participate, so please cancel if you won't be part of the hackathon.

You submit by following the instructions on the Alignment Jam page.

Everyone helps rate the submissions using a shared set of criteria. During the 4 hours of judging, you first receive 5 projects that you must rate before you can judge specific projects of your choice (this avoids selective judging).

Each submission will be evaluated on the criteria below:

| Criterion | Description |
|---|---|
| Safety | How good are your arguments for how this result informs the long-term alignment and understanding of neural networks? How informative are the results for the field of ML and AI safety in general? |
| Benchmark | How informative is this as a benchmark for AI or ML systems? Does it inform the field's benchmarks for AI? |
| Novelty | Have the results not been seen before, and are they surprising compared to what we expect? |
| Generality | Do your research results show a generalization of your hypothesis? E.g. if you expect language models to overvalue evidence in the prompt compared to evidence in their training data, do you test more than just one or two different prompts and do a proper interpretability analysis of the network? |
| Reproducibility | Can we easily reproduce the research, and do we expect the results to reproduce? A high score here might mean high Generality plus a well-documented GitHub repository that reruns all experiments. |

Inspiration

Check out the continually updated inspirations and resources page on the Alignment Jam website here. See also continually updated ideas for projects on aisi.ai.

- See and add to a bunch of alignment benchmarks: Benchmarks

- Red teaming: [Red Teaming Language Models with Language Models](https://arxiv.org/abs/2202.03286) (arXiv:2202.03286)

- Using LLMs to assist human-written critiques: AI-Written Critiques Help Humans Notice Flaws

- Adaptive testing: Adaptive Testing and Debugging of NLP Models, and Polyjuice: Generating Counterfactuals for Explaining, Evaluating, and Improving Models

- CheckList, a dataset to test models: Beyond Accuracy: Behavioral Testing of NLP Models with CheckList

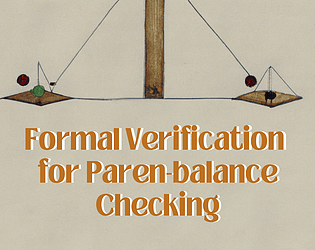

- The neural network verification book

- See project ideas for this hackathon

- Filling gaps in trustworthy development of AI (Science)

- Making machine learning trustworthy (Science)

Submissions (9)