Token Game Jam 1

Token Game Jam 1 is a game jam about making games with generative AI.

Use AI for art, audio, writing, code, ideation, animation, live generation, or anything else that helps your project. Heavy use is fine. Light use is fine. What matters is that it plays a real role in the work, and that you explain how you used it.

This jam is open to solo developers, teams, first-timers, and veterans alike. Join our Discord at https://discord.gg/8FhsxEnadv

Dates

Rewards

1st place: $50 PayPal

Rules

- Solo developers and teams are both welcome.

- You may contribute to more than one submission.

- You may submit more than one game.

- Games must be safe for work and no more than teen-rated.

- Your project must use generative AI in a meaningful way.

- Your submission should mention how AI was used (e.g., for coding, for image generation, etc.).

- The game should be made entirely, or mostly, during the jam.

- Games may be in any language.

- Web-playable is recommended, but not required.

- You keep full ownership of your work.

- Treat other participants with respect.

What Counts as AI Use?

There’s no single right way to do this. You might use AI to generate visual assets, help with programming, sketch out story ideas, build dialogue, create sound or music, or power a system inside the game itself. You might use it throughout the project, or in one key part of the pipeline.

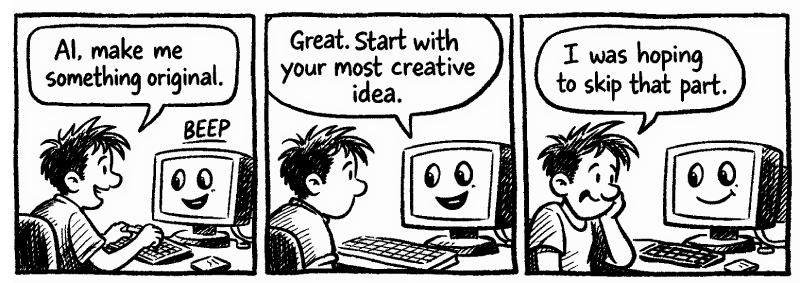

This jam is not about using as much AI as possible; it’s about using it well. Generative AI is a diverse and powerful technology that takes skill and knowledge to use well. If you want to stand out, go beyond "slop" and make something original and genuinely enjoyable to play.

Teaming Up and Asking Questions

Need teammates? Want feedback? Not sure how to approach the jam?

Use the community page:

https://itch.io/jam/415834/community

Or join the Discord:

https://discord.gg/8FhsxEnadv

If you’re new to AI tools or game development, Discord is the best place to ask questions and get unstuck.

Ready to Build Something Awesome?

This is your chance to dive in, experiment boldly, and show what generative AI can really do when paired with human creativity. Whether you're a solo dev throwing together your first AI-powered prototype, a seasoned team pushing the boundaries of live generation, or someone who's never touched a game jam before, Token Game Jam wants you.

The bar is simple but exciting: use AI meaningfully, explain your process, and make a game that's actually fun to play. No perfection required, just genuine effort and creativity. The two-week window gives you plenty of time to iterate, surprise yourself, and create something original.

So what are you waiting for? Mark your calendar, gather your ideas (or let AI help spark them), and join the jam on May 15th!

Contact

Any questions or comments? Contact the jam organizer, Coeurnix: https://coeurnix.itch.io/

New to Generative AI? A Guide to Getting Started

Why Now?

We are living in a very special time. Thanks to numerous recent advancements in hardware and software, generative AI is now capable of doing some extremely cool things with mind-blowing speed. While there are many parallels with past technological breakthroughs, the world has never seen anything quite like gen AI. The key to this is emergence, where certain latent possibilities only become visible once a level of complexity (i.e., size, connections, speed, etc.) is reached. For example, if you display one photograph a second, it is a montage or flipbook, but once the display rate reaches around 15 frames per second, it "becomes" something completely different: a video.

We've been watching generative AI upgrade its possibilities continually over the past few years and have seen that, at a certain level of complexity, a generative AI text model becomes a thinker with nuance and imagination, an image model becomes an artist creating original works it was never trained on, a video model becomes a "world model" with an implicit understanding of the physics of space and time, an agent model becomes a genuinely helpful and efficient coworker, and so on. Pessimistic predictions of hitting a "wall" preventing further improvements have not materialized, and flaws like the "strawberry problem" or poorly rendered fingers are constantly being resolved. What is possible now is simply astounding, and the future is bright!

Reasonable Expectations

Even though the possibilities are magical, it's very important to keep reasonable expectations as a game dev. You should expect that, even though it makes work easier and faster, generative AI is quite complex in its own right. Not only are there many concepts and tools to master, but they are also changing and evolving extremely quickly. Although the fundamentals are fairly stable, maximizing the quality and productivity for a specific model or tool requires experimentation and learning.

For example, early chat bots had very limited "context" (short-term memory during a conversation). This gave rise to many tips and tricks like starting new conversations for every question or using complicated RAG (Retrieval-Augmented Generation) systems, where a database of information is queried to selectively supplement a conversation. Now, however, context is much larger and more effectively understood, so getting the most out of a chat bot can mean giving it a "dump" of lots of information and then querying it. However, exactly how much information to give, what order it should be in, how it should be formatted, and so on all vary.
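As a rough illustration of the retrieval idea described above, the sketch below scores a few notes against a question by word overlap and prepends the best match to the prompt. Everything here is hypothetical (the `NOTES` data and the `retrieve` and `build_prompt` helpers), and real RAG systems usually rank by embedding similarity rather than simple word overlap.

```python
import re

# Hypothetical design notes standing in for a real database.
NOTES = [
    "The player character is a fox named Ember who collects lanterns.",
    "Enemies are slow in water and fast on sand.",
    "The final boss is a lighthouse keeper called Maro.",
]

def words(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, notes, k=1):
    """Return the k notes that share the most words with the question."""
    q = words(question)
    ranked = sorted(notes, key=lambda n: len(q & words(n)), reverse=True)
    return ranked[:k]

def build_prompt(question, notes, k=1):
    """Prepend the retrieved notes to the question as context."""
    context = "\n".join(retrieve(question, notes, k))
    return f"Context:\n{context}\n\nQuestion: {question}"

print(build_prompt("Who is the final boss?", NOTES))
```

With a large-context model, the alternative is simply to paste all of the notes into the prompt and skip the retrieval step.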

You should expect that learning how to use generative AI effectively will take some time and practice. Furthermore, it is not "settled science." Unlike source code or math, generative AI relies on fundamentally complex systems whose behavior humans cannot predict step by step. This is where the idea of "vibes" comes from: experience-informed intuition that operates at a higher level than deductive reasoning.

Another way to look at this is that you'll need to find what works for you and your purposes, which might not (and probably won't) be exactly what worked for someone else; the days of "prompt cookbooks" are largely past. That said, we can all learn from each other, and collectively discovering effective approaches as a community can be one of the most fun aspects of generative AI. Given the continual expansion of new models and their possibilities, we're all new to this, even veteran employees of AI companies!

The other expectation you should have is that, if what you are doing takes no effort or originality from you, then the perceived value of it will be relatively low. Yes, if we could travel back to a time before generative AI took off and show what we made with a one-shot prompt like "make a Space Invaders game" or an image prompt like "robot girl", it would impress people for a while. But after 100 slop prompts like those, even people in the past would quickly become unimpressed with a one-trick pony.

Without further inspiration, generative AI becomes very "samey" as a given model will largely have the same output with the same input. This is a "good" thing as the consistency can be helpful for many uses (for example, categorizing images into "hot dog" or "not hot dog"), but it means that, for creative work, YOU need to be creative. Just like any creative art form, this means an interaction between you, your vision, and the tools you use. Get to know your tools, push their limits, and see if you can bring your vision to life!

Free/Cheap Tools You Can Use

Note: this does not include dedicated paid AI game dev tools and websites because those subscriptions and tools are typically built on less expensive core services which are constantly improving; if you use the core services/tools, you'll usually save money, keep up with the latest developments, and have the most flexibility.

Ideation, Writing, and Translation

LLMs (Large Language Models) have become extremely useful for text generation, brainstorming, reviewing/editing, translating, and other text tasks. Most people access them through a web chat interface, where they can easily ask the model questions or otherwise converse with it. Modern models typically can search the web in response to questions, making them fantastic research tools. LLMs accept system prompts or early instructions that affect later responses. For example, you can instruct an LLM to respond with a specific writing style or as a specific character, allowing for considerable creativity so that text doesn't sound like the typical LLM.
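When you talk to an LLM through an API rather than a web chat, the system prompt is commonly sent as the first entry in a list of role-tagged messages. The sketch below builds such a list in the widely used "system"/"user" chat format; the `build_messages` helper and the shopkeeper persona are made up for illustration, and the exact request format depends on the provider you choose.

```python
import json

def build_messages(system_prompt, user_text):
    """Return a chat message list with the system prompt placed first."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]

# Hypothetical persona: the system prompt sets the character and style,
# and the user message is what the player typed.
messages = build_messages(
    "You are Brindle, a grumpy pirate shopkeeper. Answer in one short sentence.",
    "How much for the rusty compass?",
)
print(json.dumps(messages, indent=2))
```

In a web chat interface, you can get a similar effect by putting the same instructions at the start of the conversation.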

Good free LLMs:

- ChatGPT: https://chatgpt.com

- Claude: https://claude.ai

- Gemini: https://gemini.google.com

- Grok: https://grok.com

- DeepSeek: https://chat.deepseek.com

- Qwen: https://chat.qwen.ai

- Kimi: https://kimi.ai

- Z.ai: https://chat.z.ai

- Mistral: https://chat.mistral.ai

Want to try lots of different models easily? OpenRouter https://openrouter.ai/ allows you to do so and they almost always have some free models available: https://openrouter.ai/models?max_price=0&order=most-popular

Local LLMs:

If you have a strong graphics card such as a recent NVIDIA card with 8+ GB of VRAM, a recent Mac with plenty of RAM, or don't mind waiting a bit for a response, you can also run LLMs yourself. This is a fairly complex undertaking, but gives you complete control, free and unlimited use, and total privacy. You'll need a program to create responses (i.e., inference) and a model. A good program for inference is llama.cpp (https://github.com/ggml-org/llama.cpp) and the typical place to download models is Hugging Face (https://huggingface.co/models). Some good models to start with are the Qwen 3.5 and Gemma 4 families. Note that models are typically many gigabytes in size, though quantization helps shrink them (q4_k_m is a good choice), and you'll want a model that "fits" within your memory fairly well. Responses are also usually slower than with an API or online chatbot, as those are often served using specialized processors costing tens of thousands of dollars.
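To get a feel for whether a model "fits", a back-of-the-envelope estimate is parameter count times bits per weight, divided by 8 to get bytes. The sketch below does that arithmetic. The figure of roughly 4.8 bits per weight for q4_k_m and the 1.2 overhead factor for context and runtime buffers are rough assumptions, not measured values, and the helper names are hypothetical.

```python
def model_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8

def fits(params_billions, bits_per_weight, memory_gb, overhead=1.2):
    """Check whether the weights plus a rough overhead margin fit in memory."""
    return model_size_gb(params_billions, bits_per_weight) * overhead <= memory_gb

# An 8-billion-parameter model at 16 bits per weight (unquantized)
# versus an assumed ~4.8 bits per weight for q4_k_m.
print(model_size_gb(8, 16))
print(model_size_gb(8, 4.8))
print(fits(8, 16, memory_gb=8))
print(fits(8, 4.8, memory_gb=8))
```

Actual file sizes are listed on each model's download page, so treat this only as a quick sanity check before downloading.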

For more information, check out the LocalLLaMA subreddit at https://www.reddit.com/r/LocalLLaMA/

Programming

Generally, there are three ways to use generative AI for coding. All of these can be used for game dev, whether your goal is writing an original engine, using libraries like SDL or Phaser, or coding for engines like Unity, Godot, or Unreal.

1. Code with a Chat Bot

This is a very easy way to get started and works especially well with relatively small, focused tasks like creating a single-file script. The technique is simple: just ask an LLM chat bot like DeepSeek or GPT to create a script that does a specific task. You'll typically provide considerable detail, like what programming language and libraries to use, what features and constraints it should have, and so on. You may want to also ask it how exactly to use/run/test whatever it makes. If what it writes doesn't work (which is not unusual), you'll typically copy and paste the error or problem it is having and it'll adjust the code or otherwise help you solve it.

One improvement to just giving it a one-shot prompt is to start by dialoguing with the chat bot about what you'll need to do the task. Ask it what it thinks will work well, what might be very complicated or error prone, how to improve it, etc. Once you and the chat bot have figured out what it should do, then ask it to make the code.

2. IDE Assistants

While the chat bot approach works pretty well for simple needs, it often breaks down when the task is complex. Programmers often manage complexity already using IDEs (Integrated Development Environments), which make it easy to manage many interconnected files, run commands like building and testing, and do step-by-step debugging through code. Generative AI can be very helpful for programmers who use IDEs. One way is through AI-assisted code completion, where AI continually examines your code and allows you, by pressing tab or similar, to automatically complete or modify code at your cursor. (This is very similar to older autocomplete like IntelliSense, but far more contextually intelligent.) You can also often highlight a region and then give it a custom command, such as rewriting it in some way. At the cutting edge of IDE assistants are agents that will carry out more complex commands, like creating entirely new files for you in the IDE.

3. Agents

Modern agents do not need an IDE. In fact, many work well just as a CLI (command-line interface) program (n.b., graphic wrappers for these CLIs are often available). The CLIs provide text interfaces where you can tell the agent exactly what you want done or changed, and they'll work independently to do it for you, reporting back on their progress ready for more work. The work done goes beyond editing files; agents are often masters of using command-line utilities like compilers, test runners, and more. More advanced agents can even do things like view and run the programs they are working on.

This is currently the cutting edge of using generative AI for coding, where programmers often act more as project managers, creating detailed plans for their programs (often in collaboration with an LLM or agent), then having the agent do the direct work. The human programmer will then often review and manually test the system, proposing additions and changes to the agent. The process can accelerate development 10x or even 100x compared to traditional methods.

In addition to free agents that allow this kind of work like OpenCode (https://opencode.ai/), there are popular subscription services like Codex (https://developers.openai.com/codex -- free plan is limited, but provides generous use if you are an OpenAI subscriber) and Claude Code (https://code.claude.com/docs/en/overview).

Images

For many people, one of the most time-consuming and difficult aspects of modern game development is creating images. Even professional artists can find the time and style consistency demands challenging. Generative AI can be a massive help, making the generation of very high quality custom art available to anyone in seconds and for virtually zero cost.

However, bringing a specific vision to life with generative AI is often harder than expected. It's often easier to start by exploring what you can readily get it to create and adjust, then consider how you might use that in your game. On the other hand, advanced users and existing artists looking for new creative possibilities will find an almost unlimited palette to play with.

The two basic ways users create images with generative AI are text-to-image (T2I) and image-to-image (I2I). With text-to-image, you give a text prompt and the model creates an image that matches the prompt. Image-to-image adds one or more existing images to the mix, allowing you to do things like change the art style of an image, turn a primitive sketch into a watercolor, or make selective edits like adding a given character from one image to a scene in a second image. Exactly how complex prompts can be and what is possible in terms of consistency and features depends on the model you are using.

Google, OpenAI, and other companies often allow a limited number of free image generations, or you may want to consider using your own graphics card to generate images locally. Not only is this free, but the style and customization possibilities through software like Comfy (https://www.comfy.org/) are radically greater than with commercial services. The trade-off is that generations are often much slower, setup is typically complex, and the best open source models lag state-of-the-art commercial ones by a generation or two. For more information about creating images locally, check out the StableDiffusion subreddit at https://www.reddit.com/r/StableDiffusion/

Video

Generative AI video has dramatically improved in recent years, allowing for very interesting possibilities in game dev for trailers, cinematics, animations, nonlinear video, backgrounds, dialogue clips, and other uses. There are numerous commercial providers with excellent models for under 10 cents per second of generated video, sometimes with audio included. In some cases, there are limited free trial generations available, such as through Kling, Midjourney, Luma, Wan, or other providers. However, if you have a strong graphics card, you can also generate your own videos for free using open source models like LTX and Wan. Comfy, mentioned above for Images, supports this, as do some free software applications.

As with image generation, video generation can start from an existing image (image-to-video), allowing for creative possibilities in connecting or looping videos. Some more specialized models are designed for adding lipsync, matching an existing image or video with a given audio or text-to-speech (TTS) script. State-of-the-art (SOTA) models are highly directable, maintaining excellent character consistency and handling professional-looking cuts and transitions flawlessly. Even more than with image generation, video generation is developing rapidly and you can expect significant improvements throughout the year.

Audio

Generative AI for voice-overs, narration, and other dialogue is quite good now, with generally convincing, directable models available at very low cost or for free locally. Some models support voice cloning, and others can produce convincing sound effects given a text prompt. Song generation is rarer and is currently done mostly with commercial models like those from Suno, ElevenLabs, and Google. The audio quality of these generated songs has become extremely good, although they often work better for some styles of music than others. More advanced models allow you to control sound production, including by "inspiring" songs with your own singing or instrumentation.

3D Models

Generative AI can create 3D models as well as custom textures. There are several commercial providers of 3D generation, as well as open source models that can be run locally. While image generation is competitive with professional artists, generating 3D models has not yet reached the level of skilled modelers and 3D animators. However, for many uses, such as static 3D props, it can be a massive time saver and texture generation is getting very good.

Runtime AI

If you are already experienced with AI and/or programming and want a challenge, you might want to consider including generative AI at runtime in your game. This is dangerous territory, as you must ensure that you do not include your API keys in your web app or game binaries. If you do, people will likely steal your API key and use it to generate content of their own at your expense. The correct approach is to run your own backend on a separate, secured platform such as a Cloudflare Worker, and then have that backend talk to your AI provider. Even in that scenario, however, you will want to consider how to rate-limit requests or otherwise ensure that your AI pipeline is not co-opted by someone pretending to be the game client and using your API indirectly.
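The rate-limiting concern above can be sketched as a small per-client limiter that your backend checks before forwarding anything to the AI provider. This is a minimal illustration under stated assumptions, not a production design: it uses a simple fixed-window count, the limits are arbitrary, the client ID could be an IP address or session token, and `forward` is a hypothetical function that runs only on your server, where the API key lives.

```python
import time

class RateLimiter:
    """Fixed-window limiter: at most `limit` requests per client per `window` seconds."""

    def __init__(self, limit=5, window=60.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self.windows = {}  # client_id -> (window_start, count)

    def allow(self, client_id):
        """Return True and count the request if the client is under its limit."""
        now = self.clock()
        start, count = self.windows.get(client_id, (now, 0))
        if now - start >= self.window:
            start, count = now, 0  # the old window expired; start a new one
        if count >= self.limit:
            self.windows[client_id] = (start, count)
            return False
        self.windows[client_id] = (start, count + 1)
        return True

def handle_request(limiter, client_id, prompt, forward):
    """Forward the prompt to the provider only if the client is within its limit.

    `forward` is a hypothetical server-side function that holds the API key.
    """
    if not limiter.allow(client_id):
        return {"status": 429, "body": "Too many requests"}
    return {"status": 200, "body": forward(prompt)}
```

A usage example with a stand-in for the provider call: `handle_request(RateLimiter(limit=1), "player-1", "hi", lambda p: p.upper())` returns a 200 response the first time, and a second call through the same limiter within the window would return 429.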

The safest way to do runtime generation, although also quite complex, is by using a local model. In this case, you'll have to find the right balance between models small enough to run quickly on your users' systems and smart enough for your purposes. Either way, runtime generation is quite complex, so if you’re planning to do runtime AI and aren’t sure how to set it up safely, ask in the Discord and we'll try to help.

Other

In addition to these approaches, there are further ones like full world generation models such as Marble (https://marble.worldlabs.ai/) or world simulators like Google's Genie (https://labs.google/projectgenie). In addition, you might find creative use from specialized models such as those for image analysis, sentiment detection, and so on. These are more general AI models and less generative, but often there is overlap. For example, modern LLMs are sometimes VLMs (Vision Language Models), allowing them to directly understand images (and sometimes other inputs like audio) just as they would understand text as prompts. The world of generative AI is constantly changing, growing and evolving as new ideas and possibilities are explored. It is, indeed, a very special time!